These tools and metrics are designed to help AI actors develop and use trustworthy AI systems and applications that respect human rights and are fair, transparent, explainable, robust, secure and safe.

Aival Analysis Lab

Independent Quality Assurance for AI in healthcare. The Aival Analysis Lab is a suite of software solutions to streamline and standardise AI assessment. The software allows clinical users to analyse an AI system independently and rigorously, giving them confidence that it will work at their local site and for their patients, both before procurement and throughout use.

Evaluate and Compare

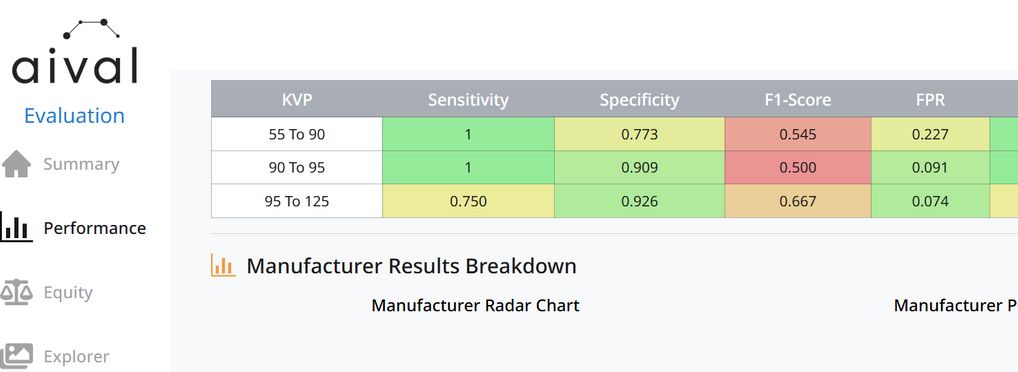

Clinicians and decision makers can evaluate and compare different AI products quickly, and choose the ones that offer the greatest clinical benefit. The analysis creates reports on the performance, fairness, robustness and explainability of the product under review and highlights any inequities between population subgroups. Different AI products for the same application can be compared against the same baseline.

Monitor

Once an AI product is in use, Aival allows a hospital to audit and monitor it to ensure that performance and fairness are maintained over time. Safety alerts can be set to warn of any potential drift in the AI results.

Curate

The software allows hospitals to collect data from the local site and ground-truth it for evaluation, with user account management to control permissions.

About the tool

Tags:

- fairness

- performance

- auditing

- healthcare

- validation

Partnership on AI