These tools and metrics are designed to help AI actors develop and use trustworthy AI systems and applications that respect human rights and are fair, transparent, explainable, robust, secure and safe.

Citadel Lens

Citadel Lens enables organizations to test, monitor, and govern their AI systems, including LLMs, vision models, and models built on tabular data.

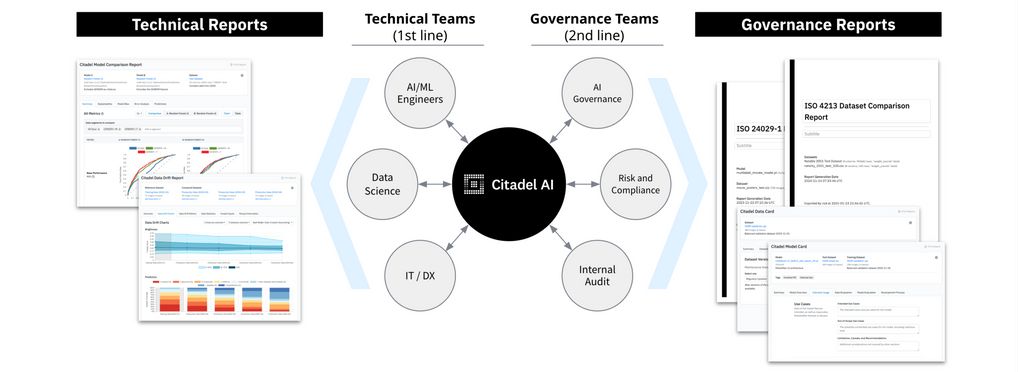

Citadel Lens bridges engineering and governance teams by automatically generating two kinds of reports: technical reports for the engineering teams who build AI systems, and governance reports, based on international and regional standards, for governance, risk, and compliance teams.

Citadel Lens was developed by Citadel AI, a startup in Tokyo created by former engineers from Google Brain, Waymo, and Toyota with first-hand experience designing high-risk AI systems.

About the tool

Tags:

- ai ethics
- ai responsible
- building trust with ai
- demonstrating trustworthy ai
- evaluation
- metrics
- model cards
- quality
- trustworthy ai
- validation of ai model
- ai assessment
- ai governance
- ai reliability
- ai auditing

Partnership on AI
