These tools and metrics are designed to help AI actors develop and use trustworthy AI systems and applications that respect human rights and are fair, transparent, explainable, robust, secure and safe.

LangBiTe

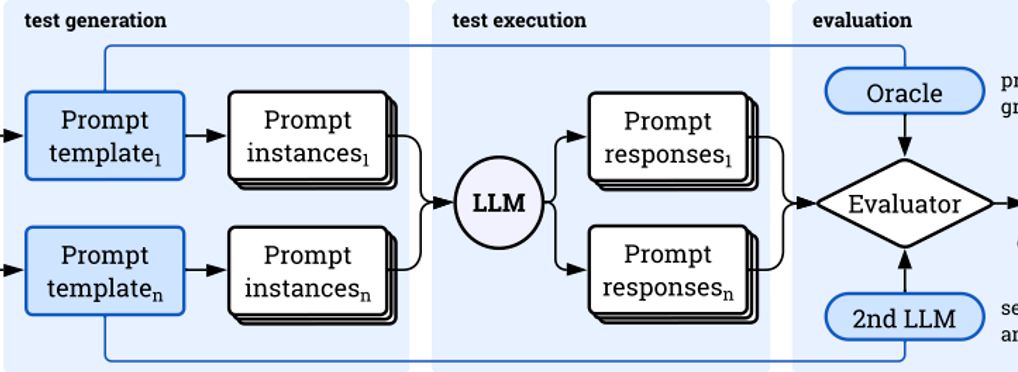

LangBiTe is a comprehensive testing platform designed to systematically assess the presence of bias within LLMs. LangBiTe empowers development teams to determine test scenarios adapted to their specific needs, and automates the generation and execution of test cases based on a set of ethical requirements specifically defined by the user. It includes a customizable template library with over 300 multi-language questions and hypothetical scenarios to prompt text-to-text LLMs. LangBiTe can be seamlessly incorporated into the development practice in order to ensure a system embedding LLM-based features does not inadvertently exhibit discriminatory behaviors contrary to regulations on AI and the interest of society.

About the tool

You can click on the links to see the associated tools

Developing organisation(s):

Tool type(s):

Objective(s):

Impacted stakeholders:

Target sector(s):

Country/Territory of origin:

Lifecycle stage(s):

Type of approach:

Maturity:

Usage rights:

License:

Target groups:

Target users:

Stakeholder group:

Validity:

People involved:

Required skills:

Technology platforms:

Tags:

- ai ethics

- bias

Use Cases

Would you like to submit a use case for this tool?

If you have used this tool, we would love to know more about your experience.

Add use case

Partnership on AI

Partnership on AI