The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

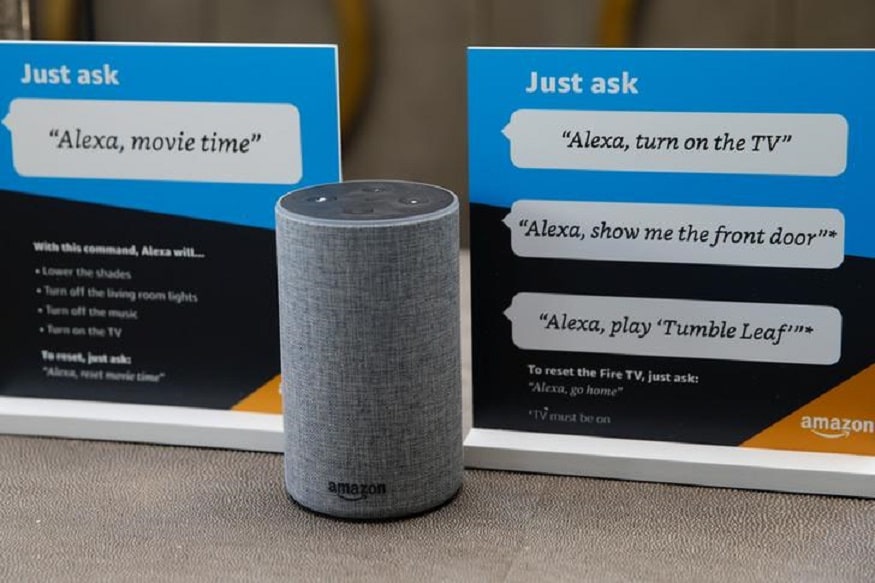

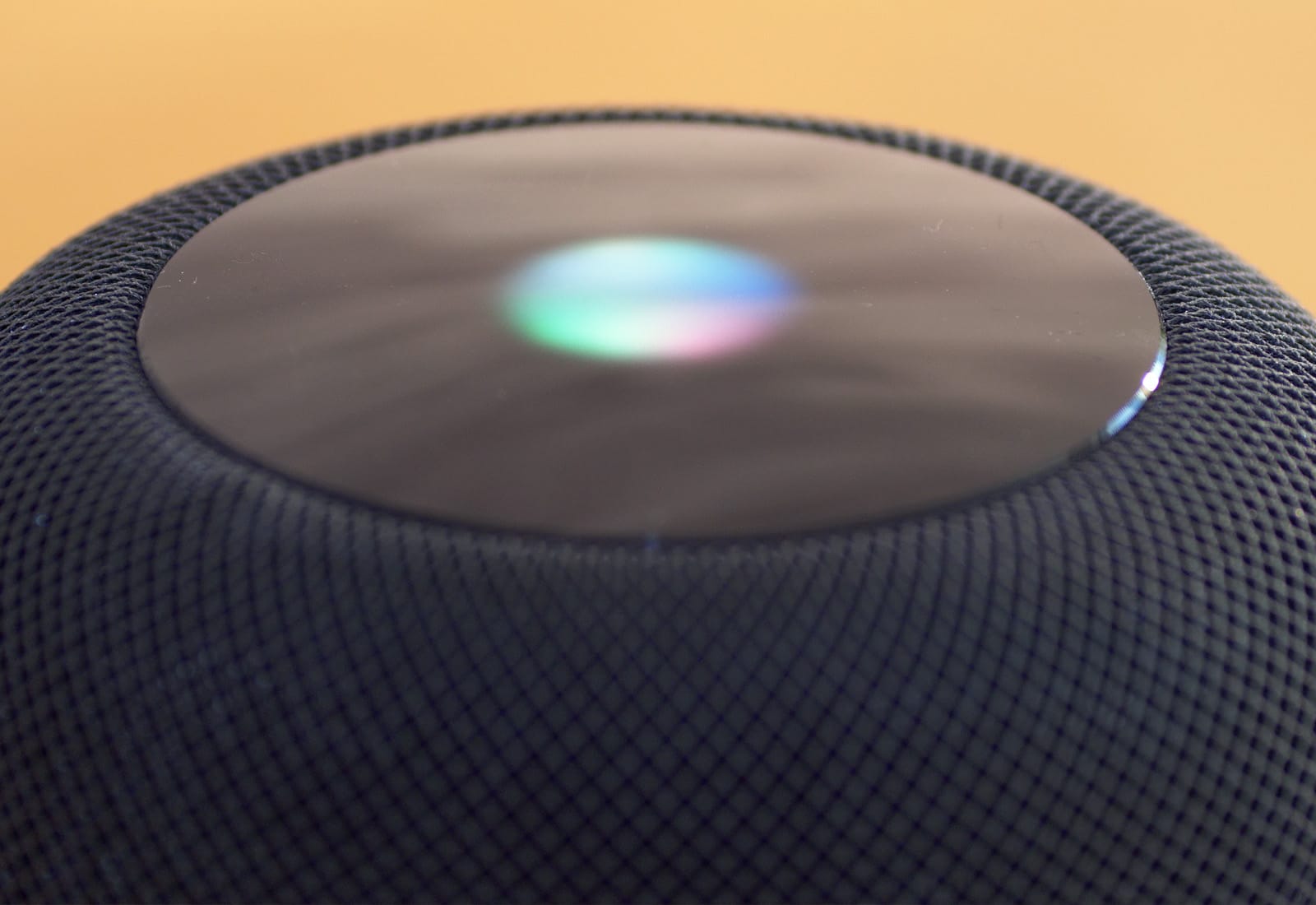

Researchers identified more than 1,000 words and phrases that can mistakenly trigger AI voice assistants such as Alexa, Siri, Google Assistant, and Cortana. These false activations cause the assistants to record and transmit private conversations without the user's consent. Company employees sometimes review these recordings, so the malfunctions can result in significant privacy breaches. [AI generated]