The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

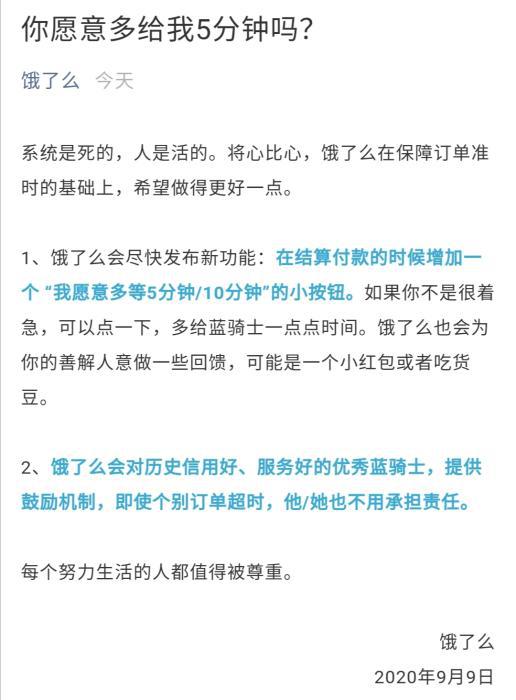

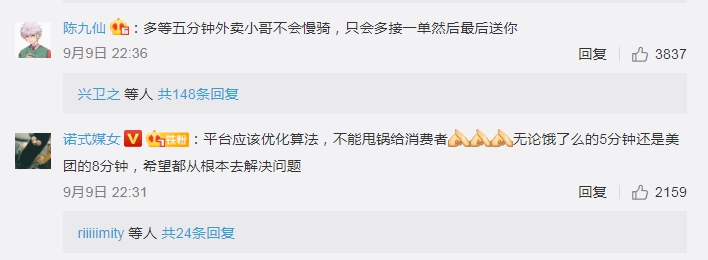

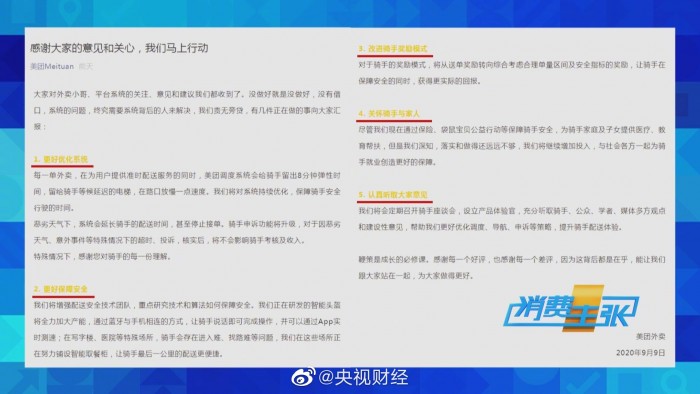

Chinese food delivery platforms Meituan and Ele.me use AI-driven algorithms to optimize delivery times, pressuring riders to speed and violate traffic rules. This has led to frequent accidents, injuries, and even deaths among riders. Public backlash prompted minor platform adjustments, but core algorithmic pressures remain, perpetuating unsafe working conditions. [AI generated]