The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

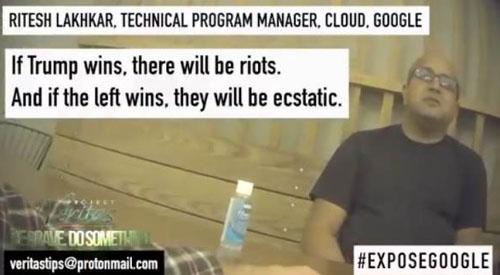

A Google Cloud program manager, Ritesh Lakhkar, was recorded by Project Veritas admitting that Google's search algorithms are intentionally skewed to favor Democratic candidates and harm Donald Trump. The manipulation of AI-driven search results is alleged to distort political information, potentially interfering with elections and violating rights to unbiased information. [AI generated]