The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

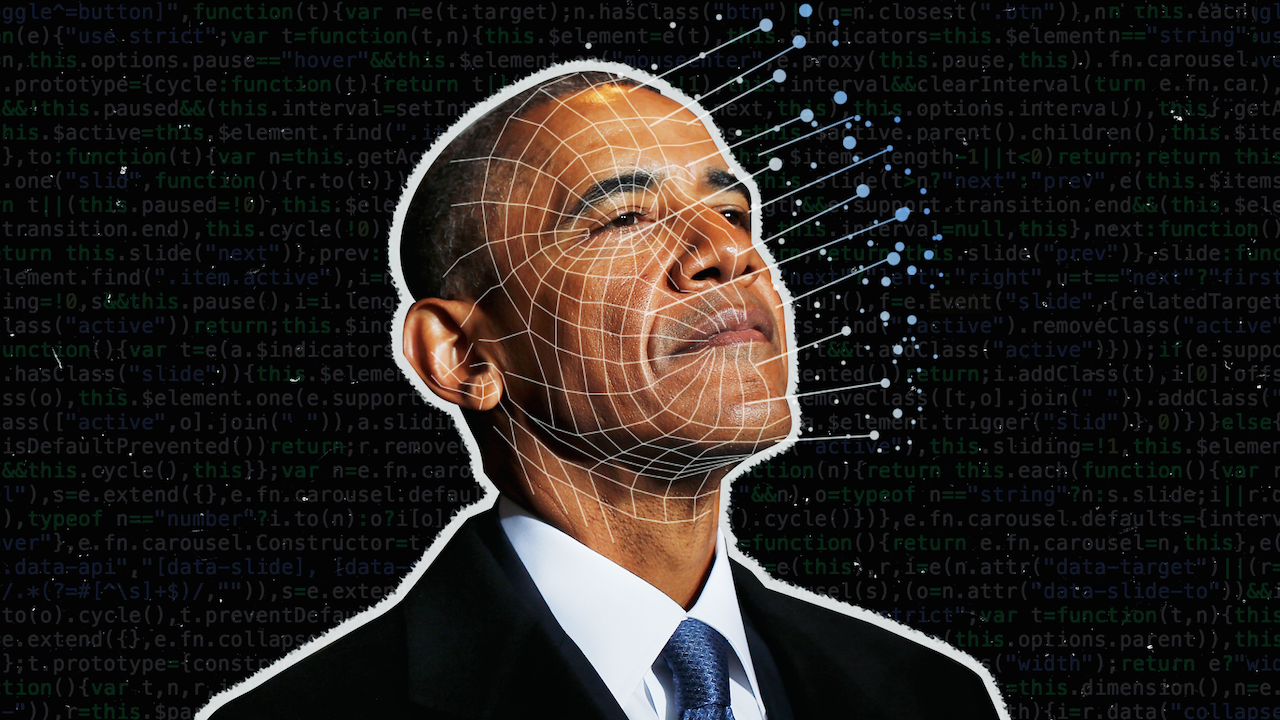

AI-powered deepfake technology has enabled the creation and spread of highly realistic fake videos and audio, leading to financial fraud, reputational damage, and widespread misinformation. Incidents include scams that use synthetic voices and videos, such as a fake Mark Zuckerberg video, to deceive individuals and undermine public trust. [AI generated]