The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

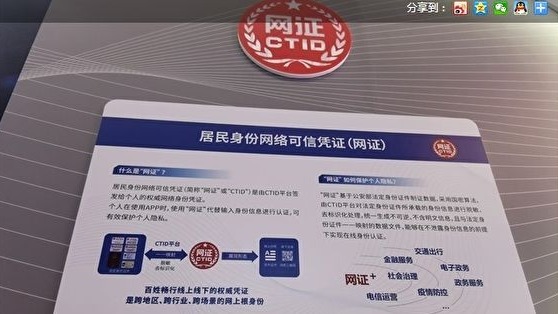

China's Ministry of Public Security has deployed an AI-driven 'network ID' system in Fujian and Guangdong that requires biometric data for online authentication. Critics warn that it enables mass surveillance, centralizes sensitive data, and could give the government control over internet access, raising significant privacy, security, and human rights concerns. [AI generated]

Why's our monitor labelling this an incident or hazard?

The event involves the use of an AI system (facial recognition and biometric verification) for online identity authentication. Although no direct harm has yet been reported, the system's deployment could plausibly lead to significant harms such as violations of privacy rights, suppression of freedom of expression, and social control, which fall under violations of human rights and harm to communities. The event therefore qualifies as an AI Hazard, given the credible risk of future harm from the AI system's use in surveillance and control. [AI generated]