The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

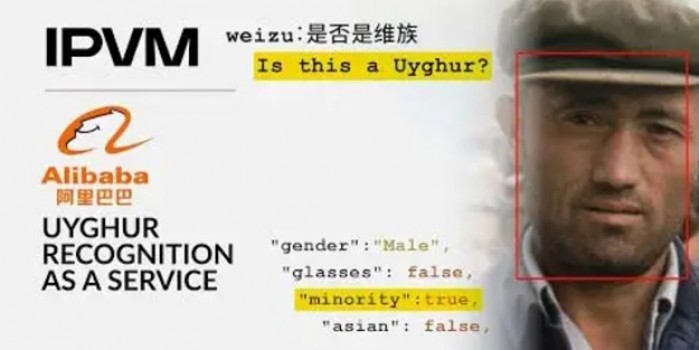

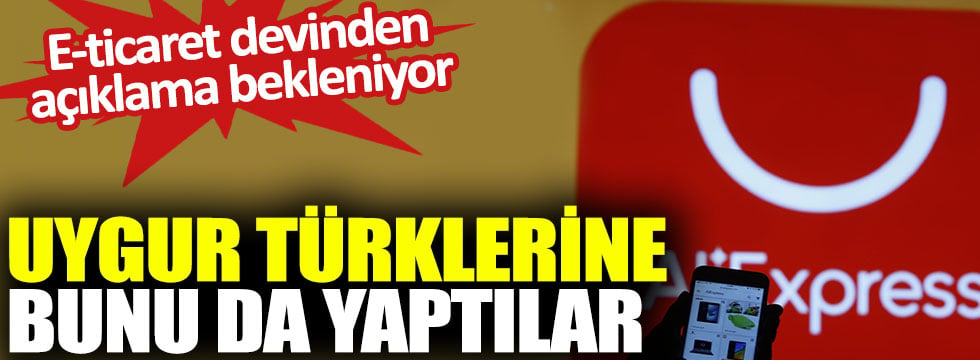

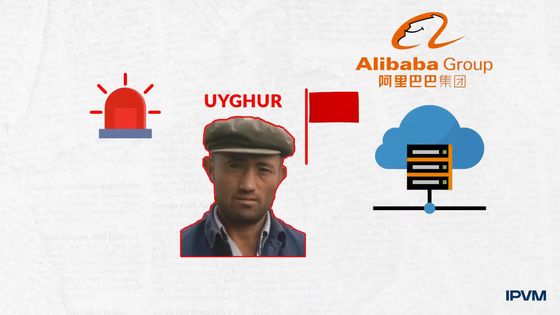

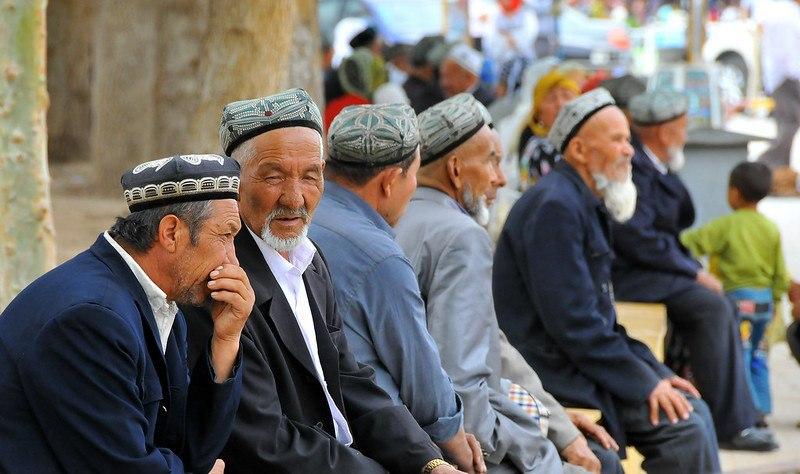

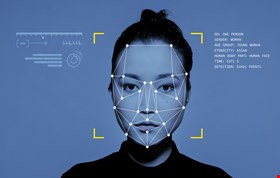

Alibaba Cloud developed and tested facial recognition software capable of identifying Uyghur individuals, raising concerns about ethnic profiling and human rights violations. Although Alibaba claims the feature was not deployed and has since removed it, the incident highlights the risks of AI-enabled surveillance targeting vulnerable minorities.[AI generated]
