The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

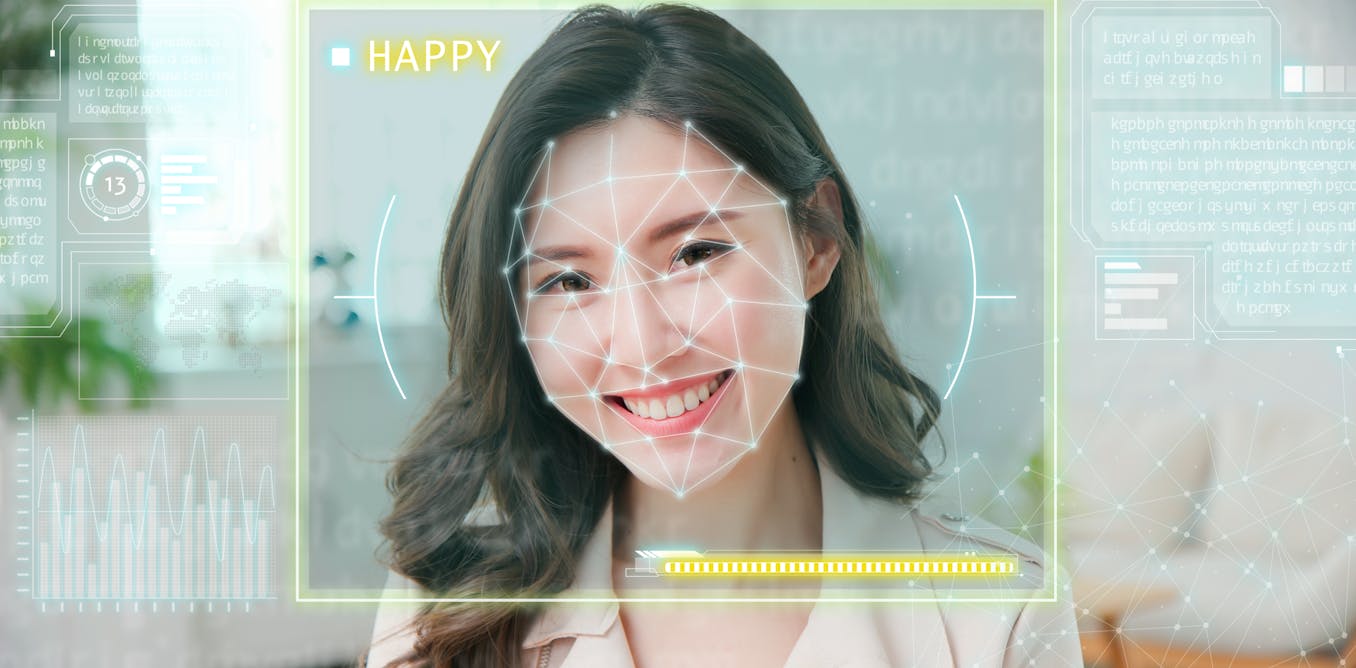

AI-powered emotion recognition technology is increasingly used in areas such as hiring, security, and policing, often without consent. These systems are criticized for racial bias and privacy violations, leading to discriminatory outcomes and raising concerns about their scientific validity and potential harm to individuals and communities.[AI generated]

Why's our monitor labelling this an incident or hazard?

The article explicitly involves AI systems (emotion recognition technology) and discusses their use and inherent biases. It highlights potential harms such as racial discrimination, privacy violations, and misuse in policing and surveillance, which align with violations of human rights and harm to communities. However, it does not describe a concrete event where harm has already occurred but rather focuses on the risks and controversies surrounding the technology's deployment and scientific validity. Hence, it fits the definition of an AI Hazard, where the AI system's use could plausibly lead to an AI Incident in the future.[AI generated]