The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

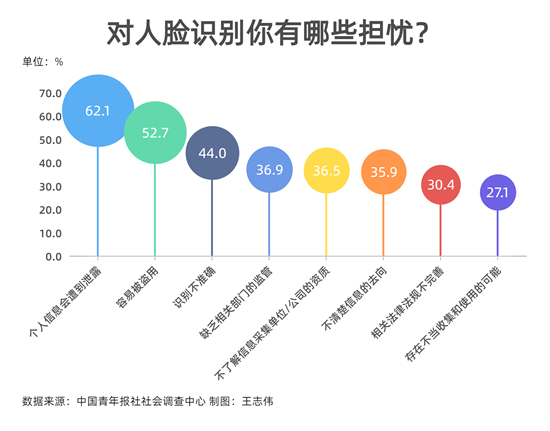

Multiple incidents in China and the US show that businesses and banks have misused facial recognition AI systems, leading to the unauthorized collection, sale, and use of biometric data. These practices have resulted in privacy violations and potential identity theft, raised concerns about racial bias and discrimination, and prompted regulatory responses.[AI generated]