The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

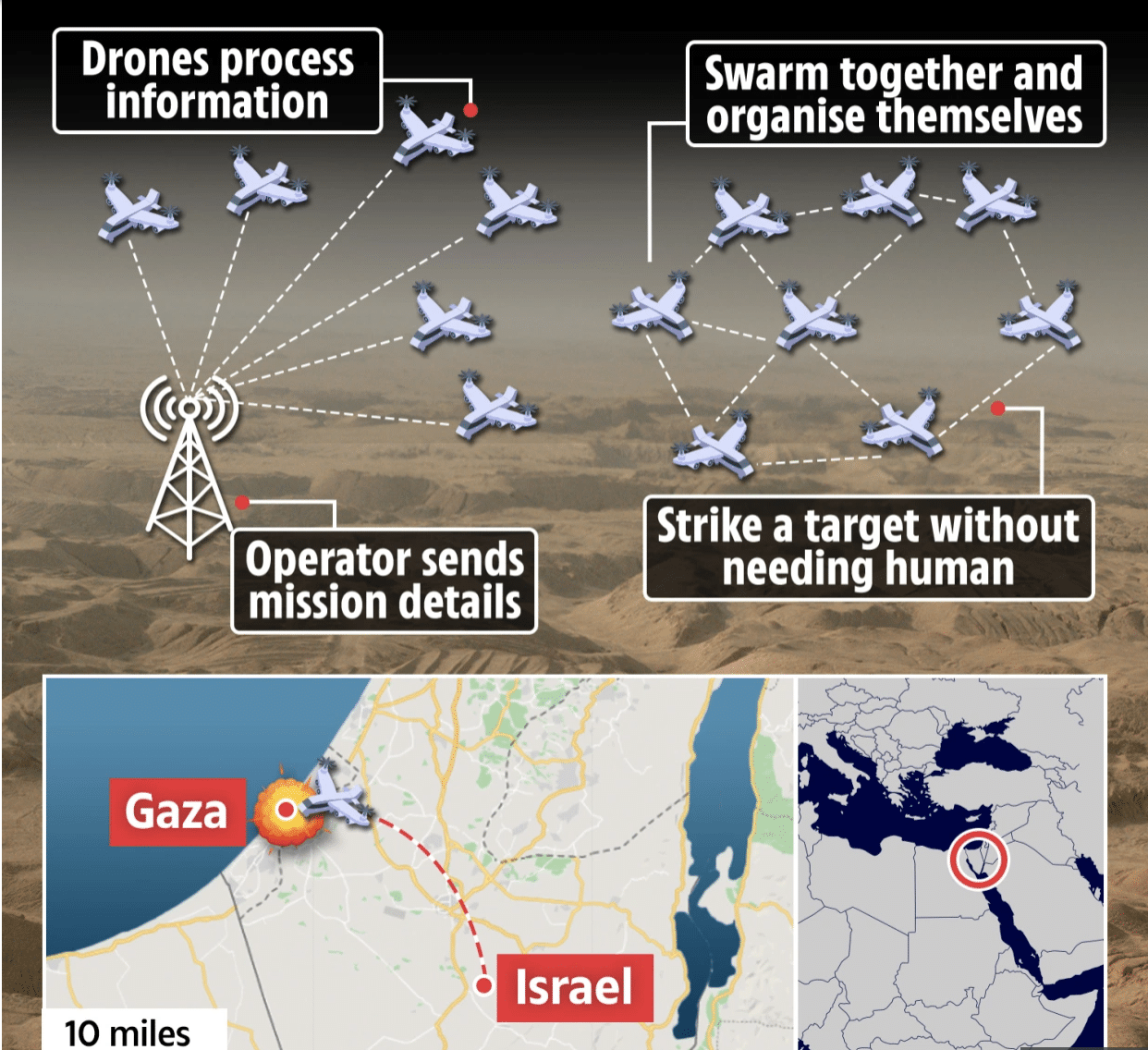

The Israel Defense Forces (IDF) became the first military to deploy fully autonomous, AI-controlled drone swarms in combat during the May 2021 Gaza conflict. Operating without human intervention once a mission was launched, the drones identified and attacked targets, killing numerous militants and raising significant ethical concerns about autonomous lethal weapons.[AI generated]