The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

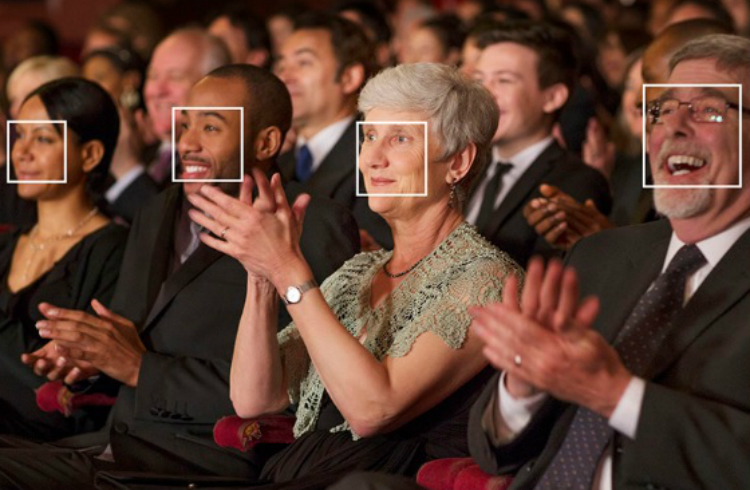

Spanish supermarket chain Mercadona was fined 2.5 million euros by the Spanish Data Protection Agency (AEPD) for deploying facial recognition AI in dozens of stores to identify individuals with restraining orders. The system violated data protection laws, leading to its removal and the termination of the pilot project. [AI generated]