The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

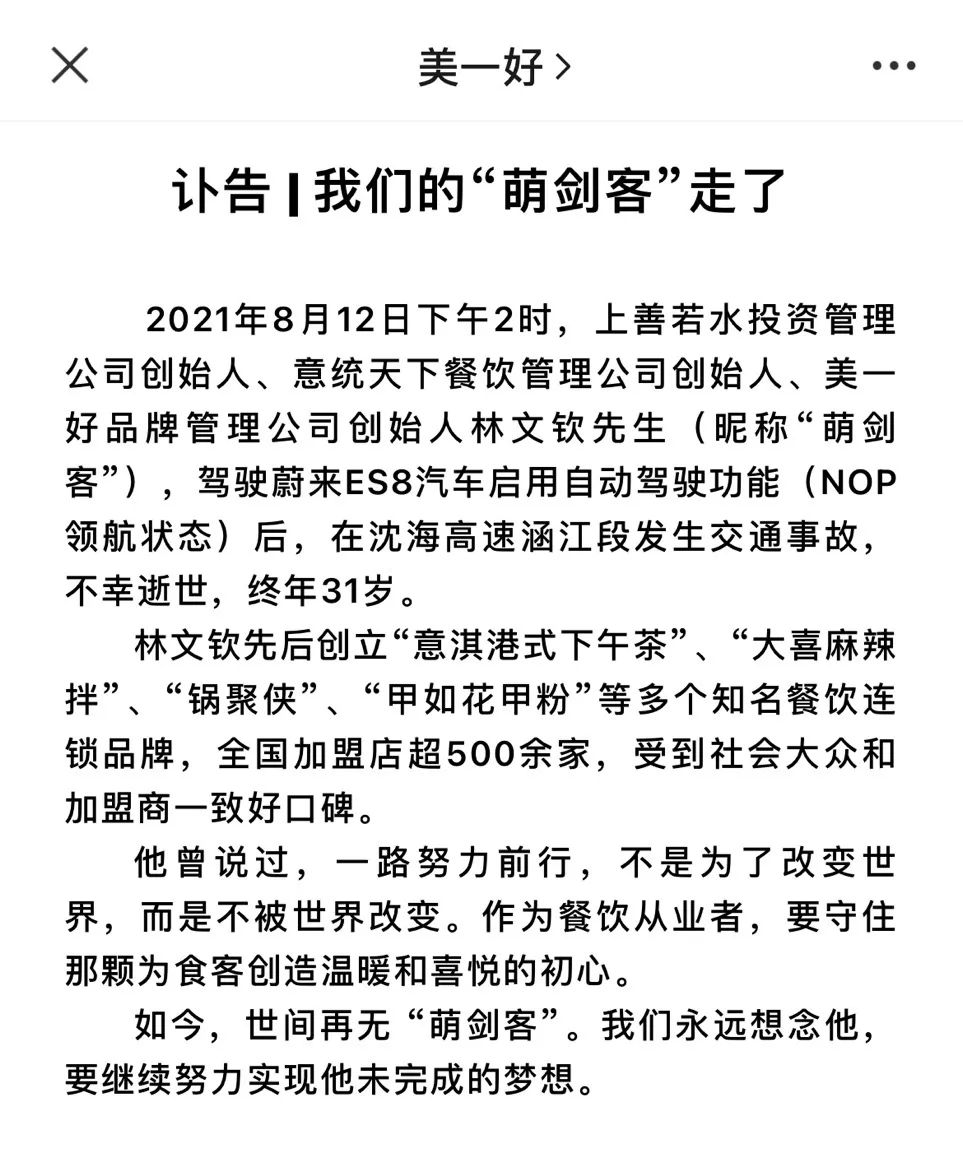

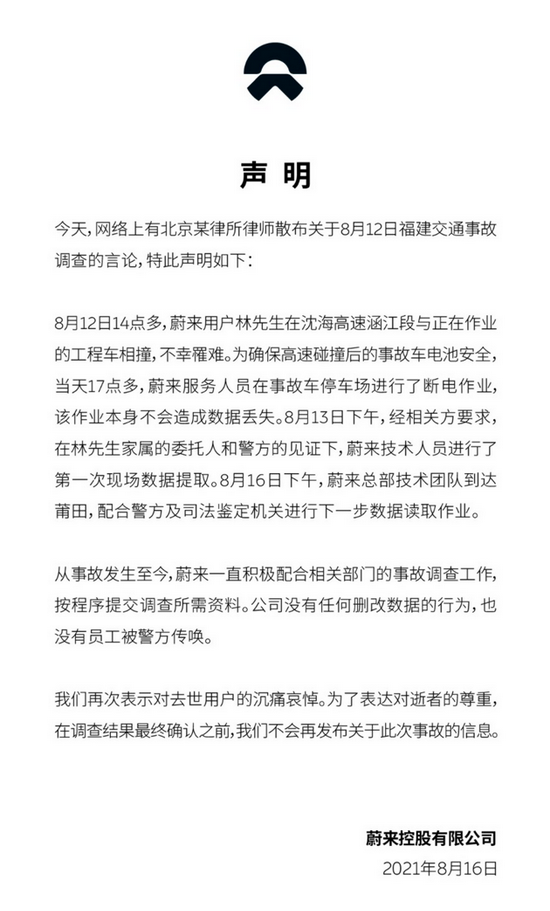

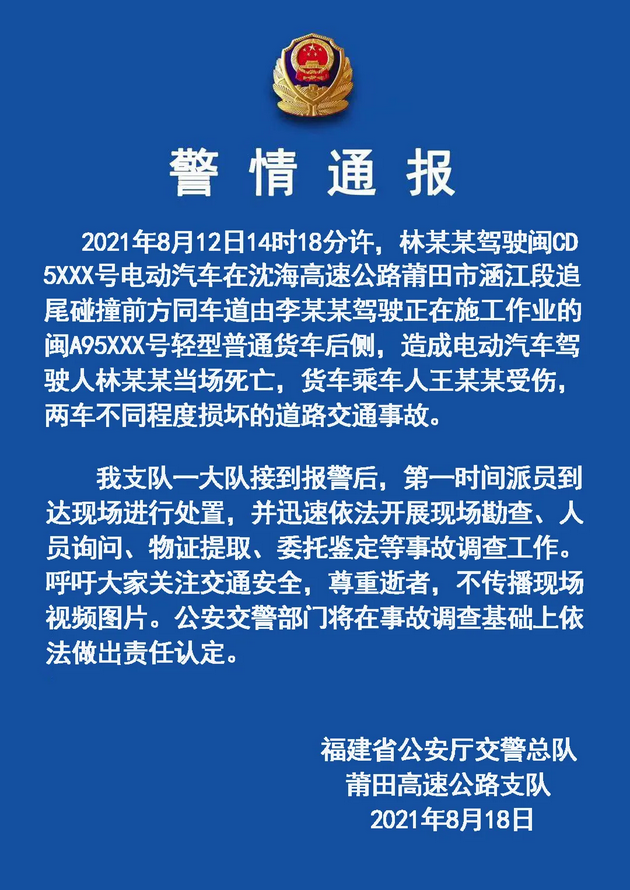

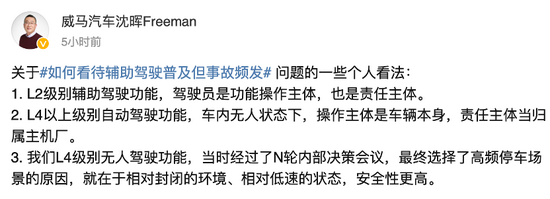

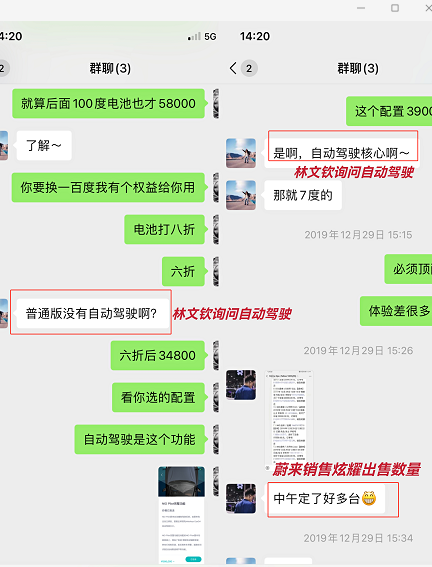

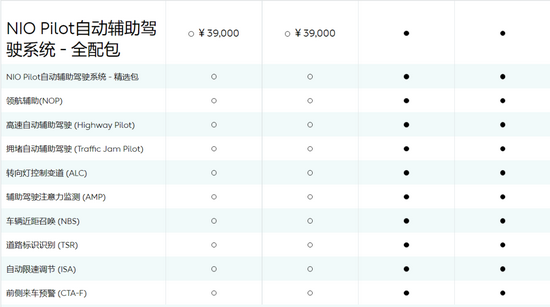

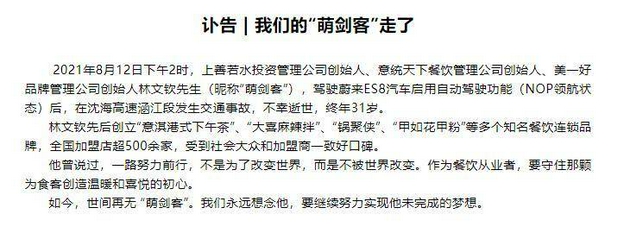

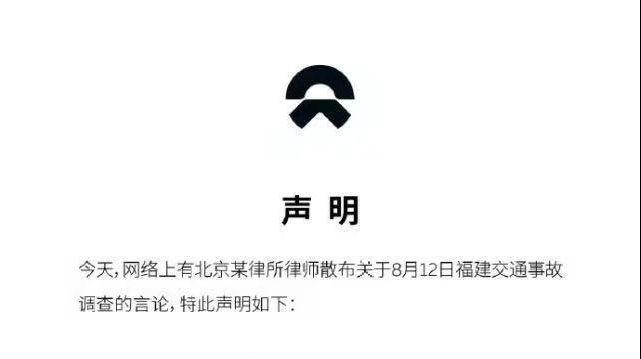

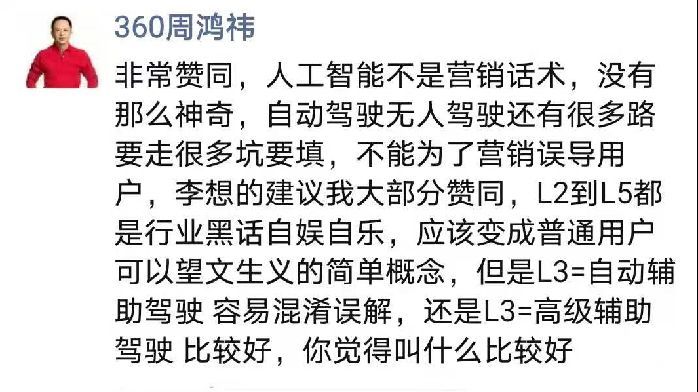

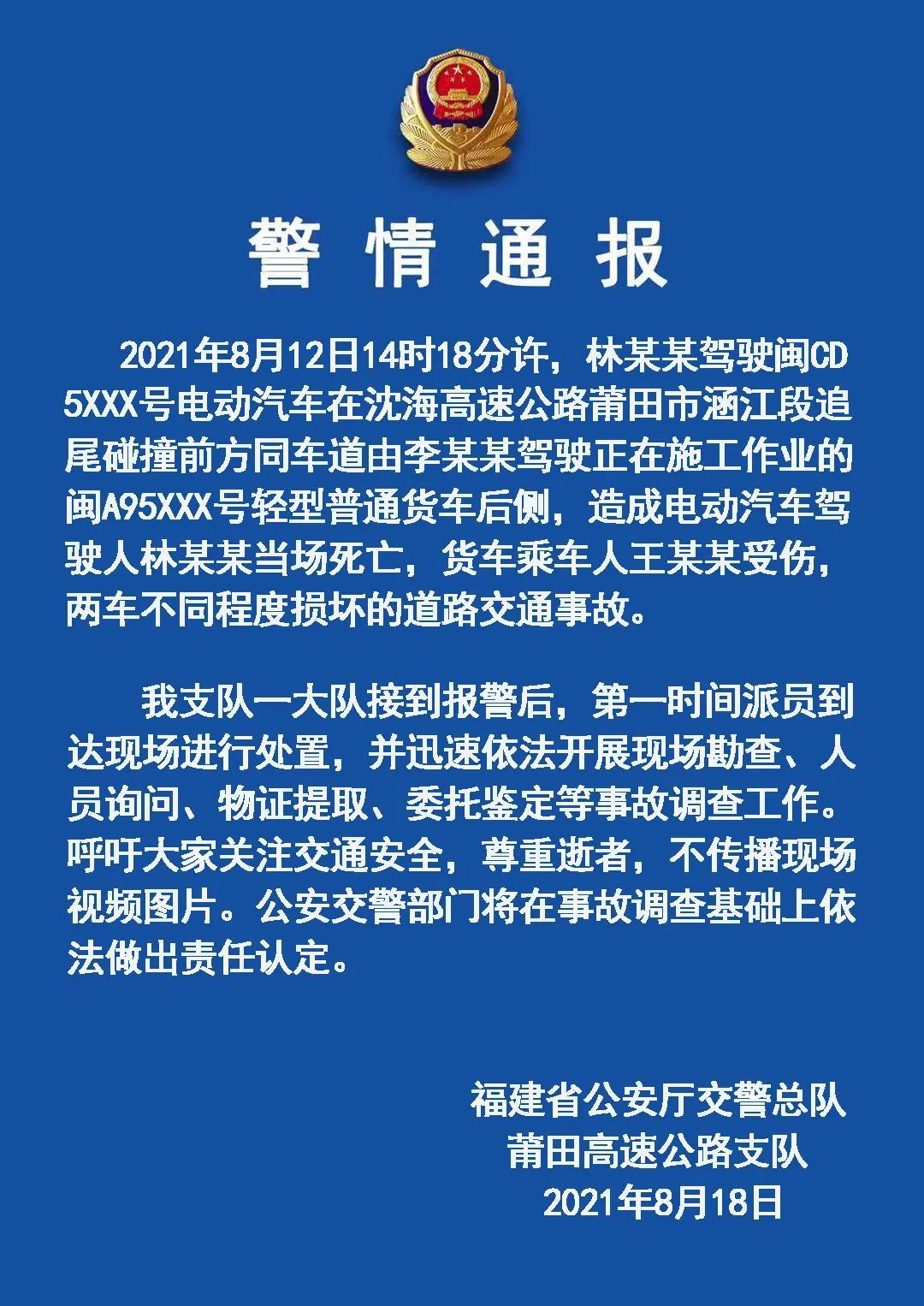

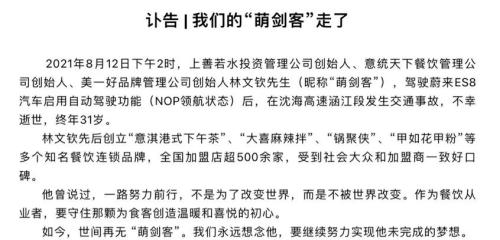

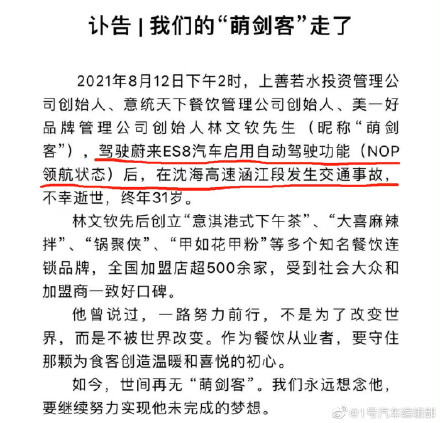

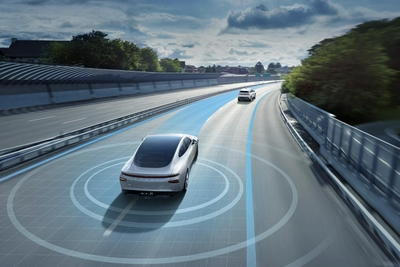

Chinese entrepreneur Lin Wenqin died in a traffic accident on a highway in Fujian while driving a NIO ES8 with the Navigate on Pilot (NOP) assisted driving system active. The incident has raised concerns about the safety of AI-assisted driving features. Investigations are ongoing, and NIO has stated that the system is not fully autonomous. [AI generated]