The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

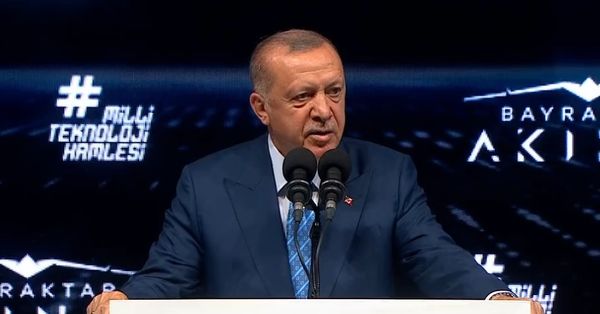

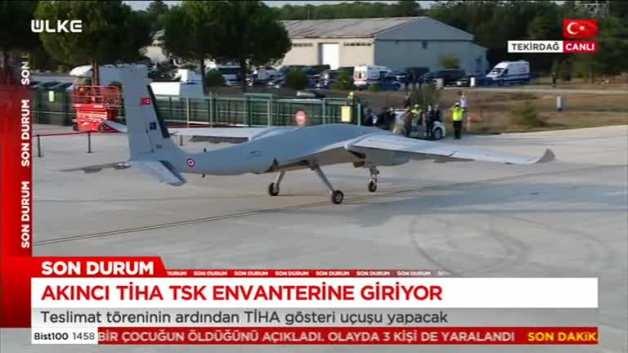

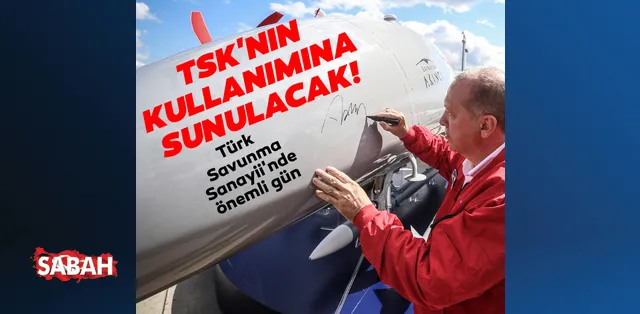

Turkey has delivered the AI-enabled Akıncı TİHA (Taarruzi İnsansız Hava Aracı, "attack unmanned aerial vehicle"), an advanced armed drone developed by Baykar, to its armed forces. The drone's autonomous capabilities and military deployment raise concerns about potential harm in conflict zones and highlight the risks associated with AI-powered weapon systems. [AI generated]