The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

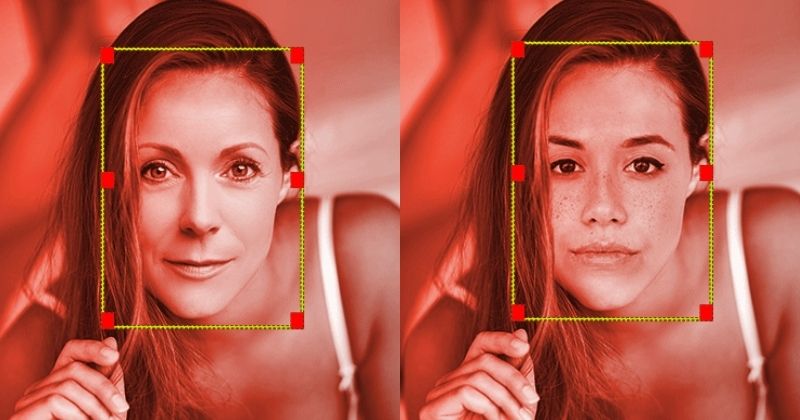

A new AI-powered deepfake app allowed users to upload photos and generate highly realistic, nonconsensual pornographic videos, primarily targeting women. The app, discovered by researchers and reported by MIT Technology Review, caused significant psychological and reputational harm before being taken offline following public backlash. Similar unethical AI tools remain online. [AI generated]