The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

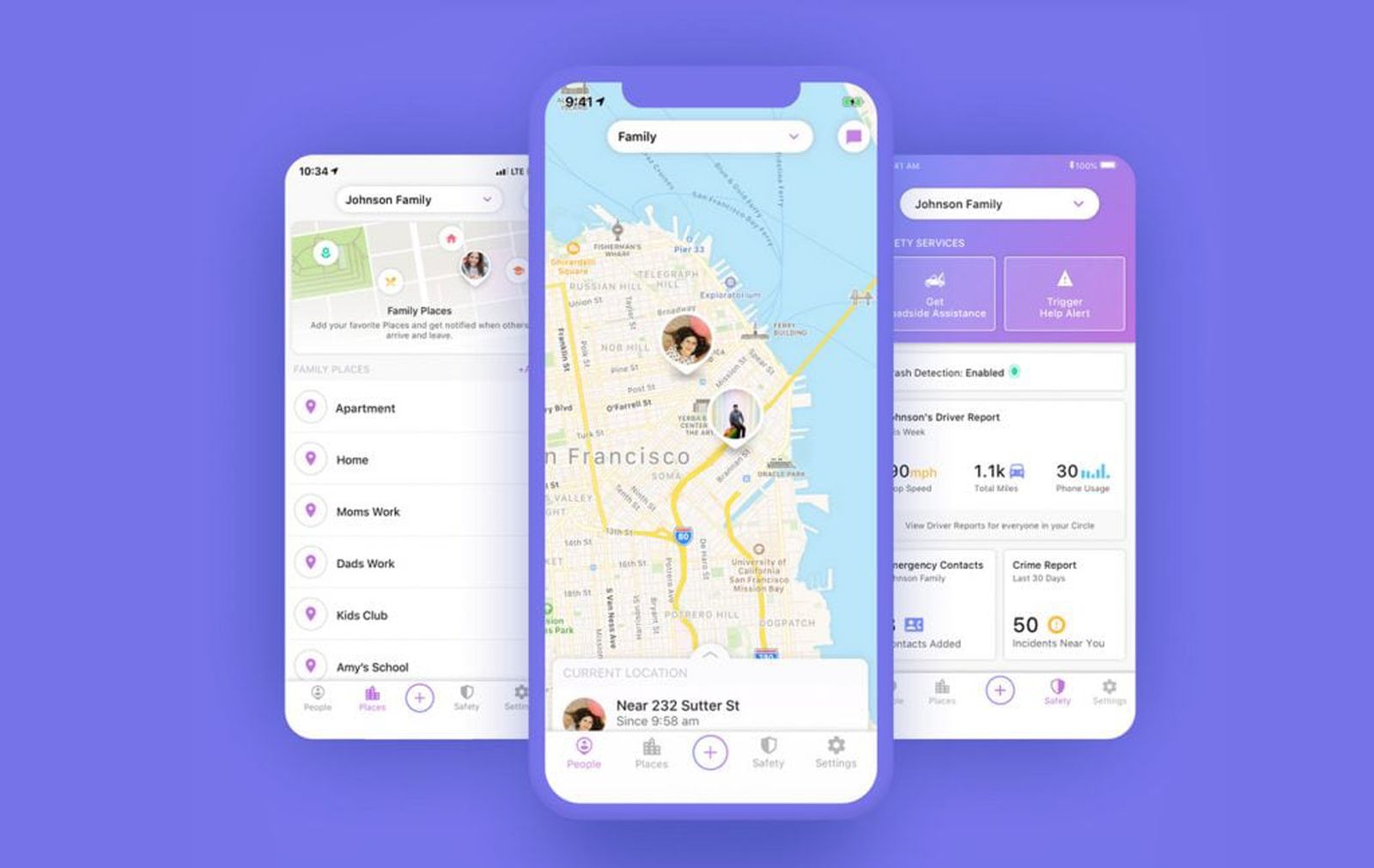

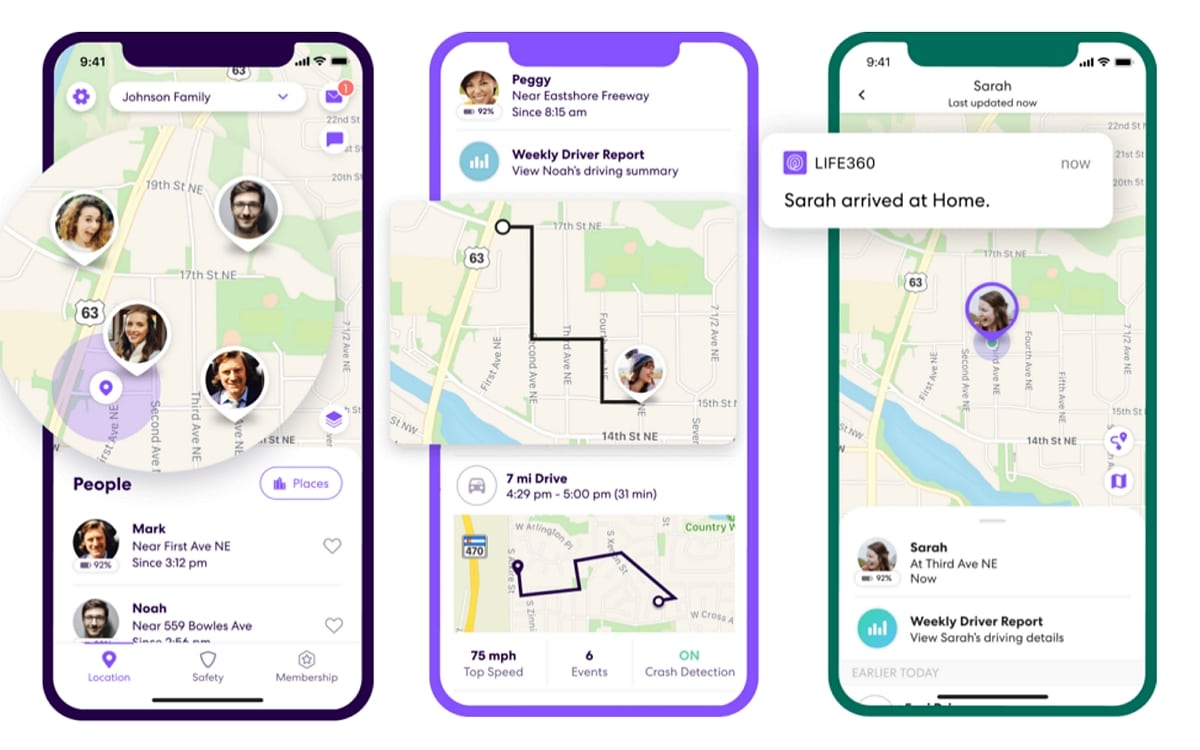

Life360, a widely used family safety app, has been selling the precise location data of millions of users, including children, to multiple data brokers. This AI-driven data collection and sale has led to significant privacy violations, exposing users to potential misuse of their sensitive information. The issue is heightened by Life360's acquisition of Tile. [AI generated]