The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

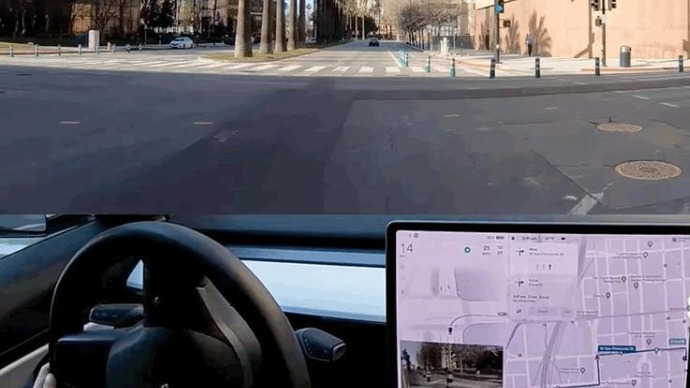

Tesla's AI-driven Autopilot and Full Self-Driving (FSD) systems have faced increased scrutiny following a surge in 'phantom braking' complaints and the first recorded FSD Beta crashes, in which vehicles struck roadside barriers. U.S. senators and regulators are investigating, citing safety risks and harms linked to Tesla's vision-only AI approach and to system malfunctions.[AI generated]