The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

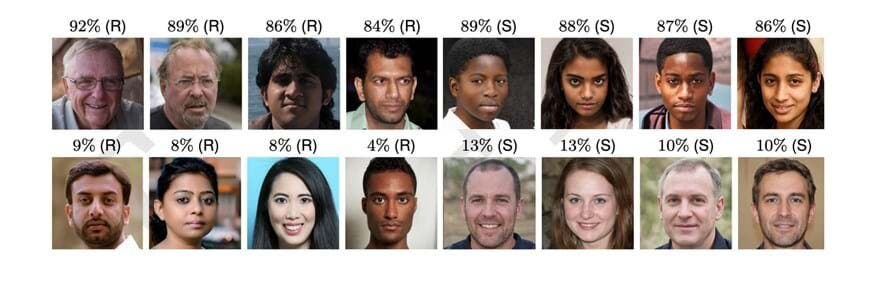

Multiple studies led by Hany Farid at UC Berkeley show that AI-generated faces, created using GANs such as NVIDIA's StyleGAN2, are now indistinguishable from real faces to human observers and are often rated as more trustworthy. This raises significant risks of fraud, deception, and erosion of public trust, with documented cases of AI-generated voices already enabling financial scams. [AI generated]