The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

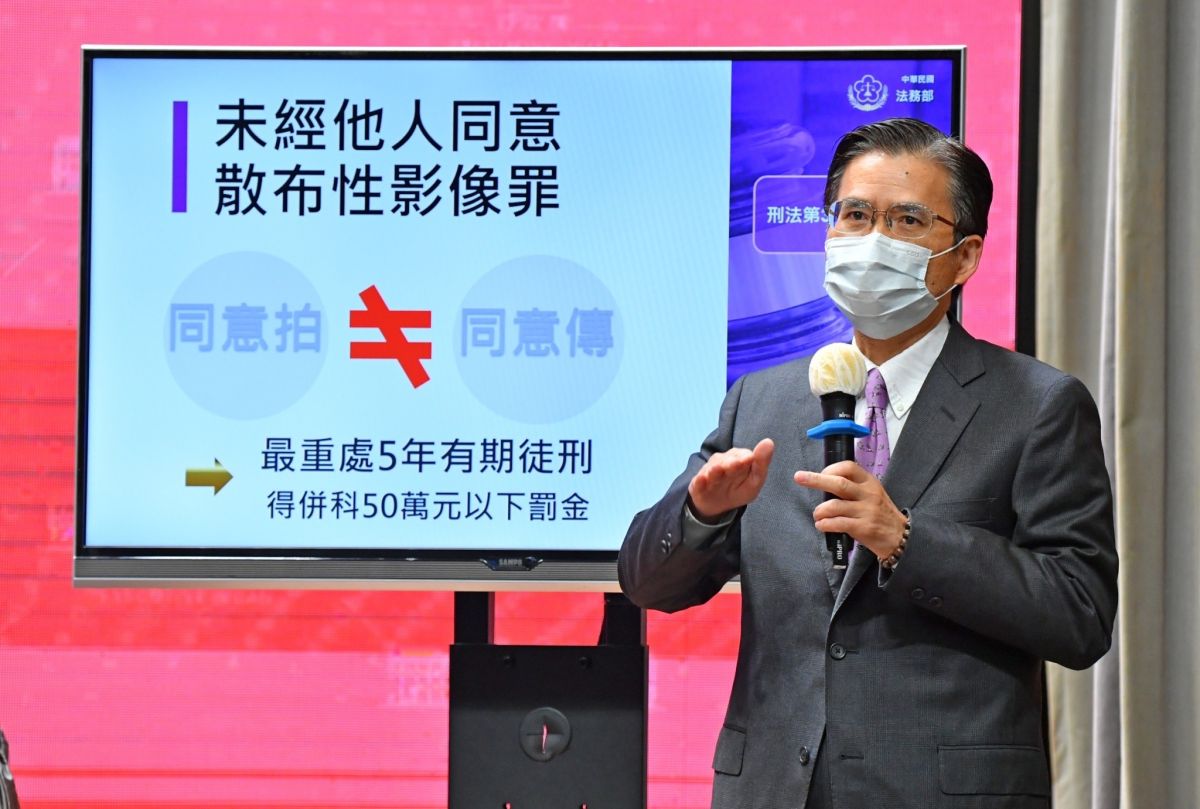

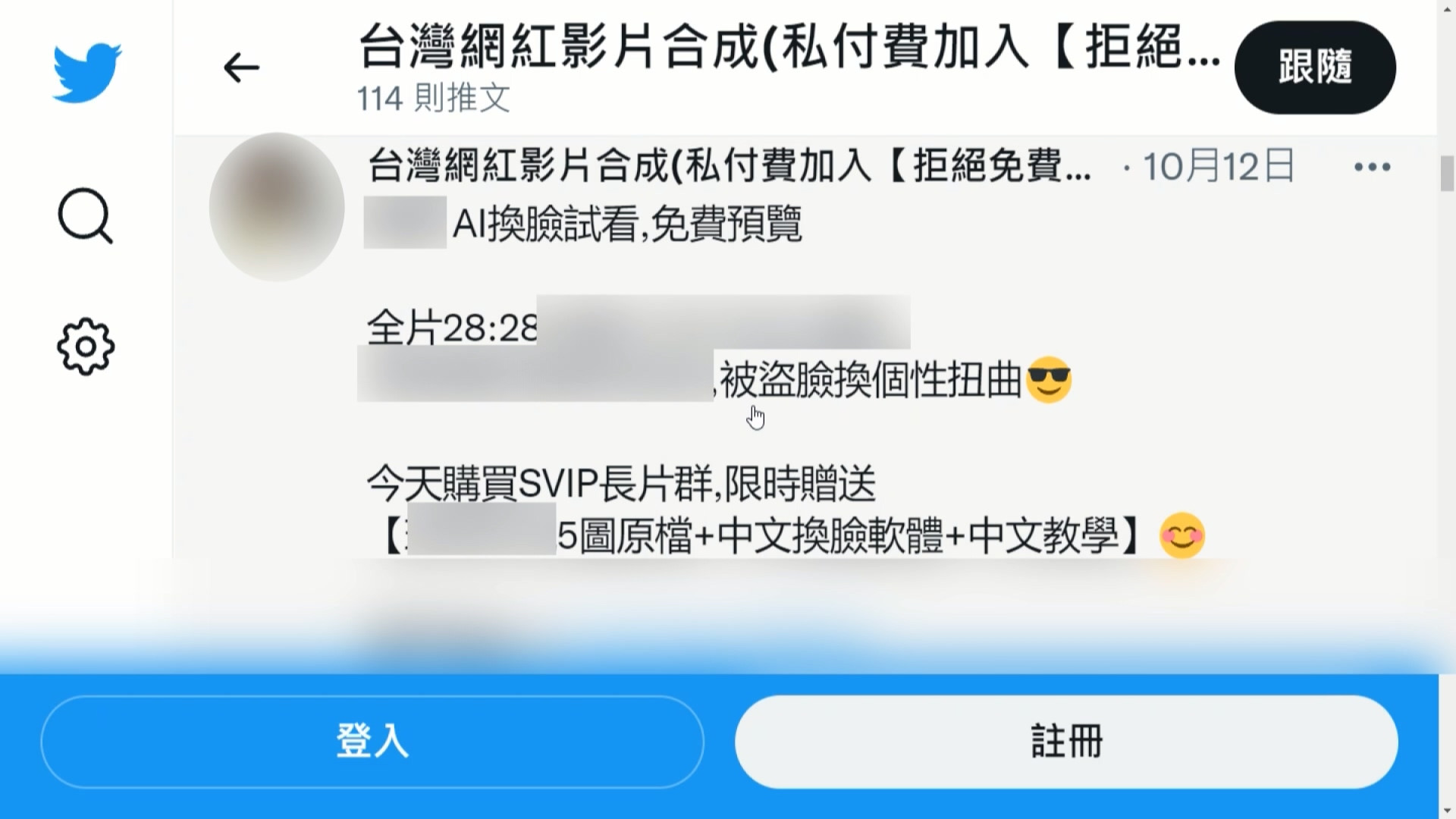

In response to a rise in harmful AI-generated deepfake sexual images and videos, Taiwan's Executive Yuan approved amendments to four laws. The amendments criminalize the creation and distribution of non-consensual deepfake sexual content, increase penalties, and require internet platforms to promptly remove illegal material, with the aim of better protecting victims.[AI generated]