The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

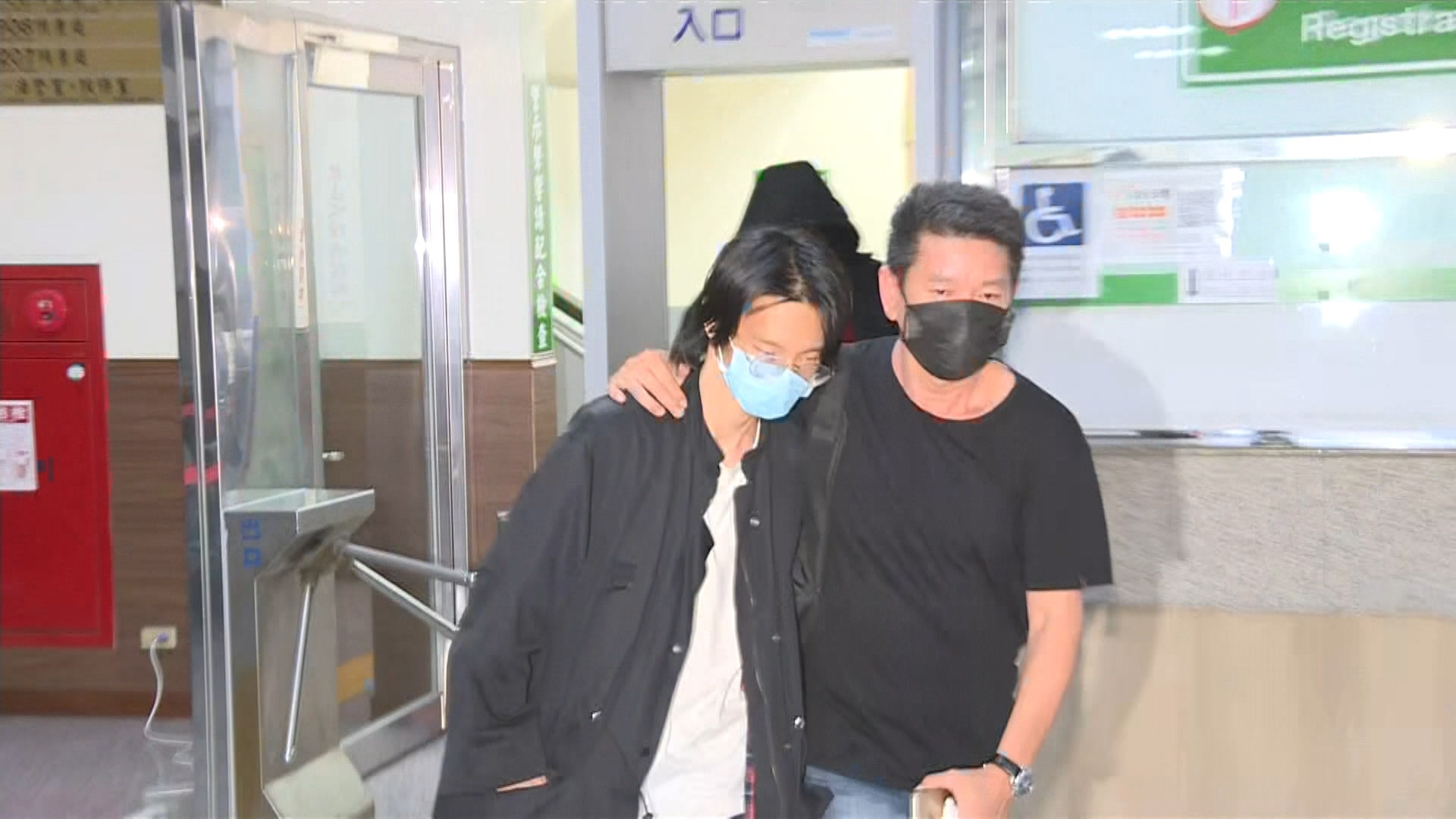

YouTuber 'Xiao Yu' and an accomplice used deepfake AI technology to create and sell non-consensual explicit videos featuring the faces of 119 public figures, causing severe and lasting harm. The pair profited over NT$13 million before being prosecuted for privacy violations, defamation, and sexual exploitation, prompting calls for stricter AI-related laws. [AI generated]

Why's our monitor labelling this an incident or hazard?

The article describes the use of AI technology to create deepfake videos, which implies the involvement of an AI system. Creating and using deepfake content for profit can be considered a violation of rights, particularly intellectual property rights and potentially privacy rights, which aligns with harm category (c). Because the deepfake videos were allegedly produced and used, the harm is realized rather than potential. The event therefore qualifies as an AI Incident, given the direct involvement of AI in producing harmful content and the resulting controversy and harm. [AI generated]