The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

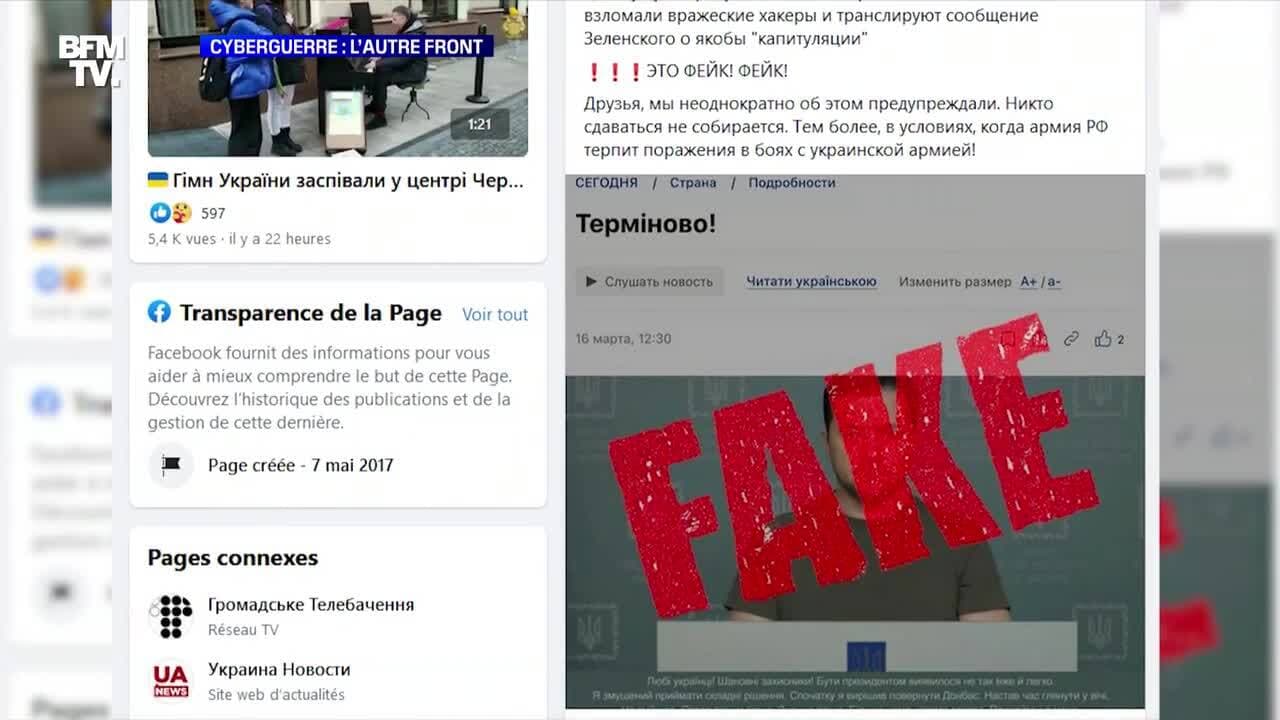

Hackers used AI-generated deepfake technology to create and broadcast a fake video of Ukrainian President Zelensky urging surrender. The video aired on a Ukrainian news channel and spread widely online. It caused panic and spread misinformation before it was debunked and removed, highlighting the dangers of AI-driven disinformation in conflict. [AI generated]