The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

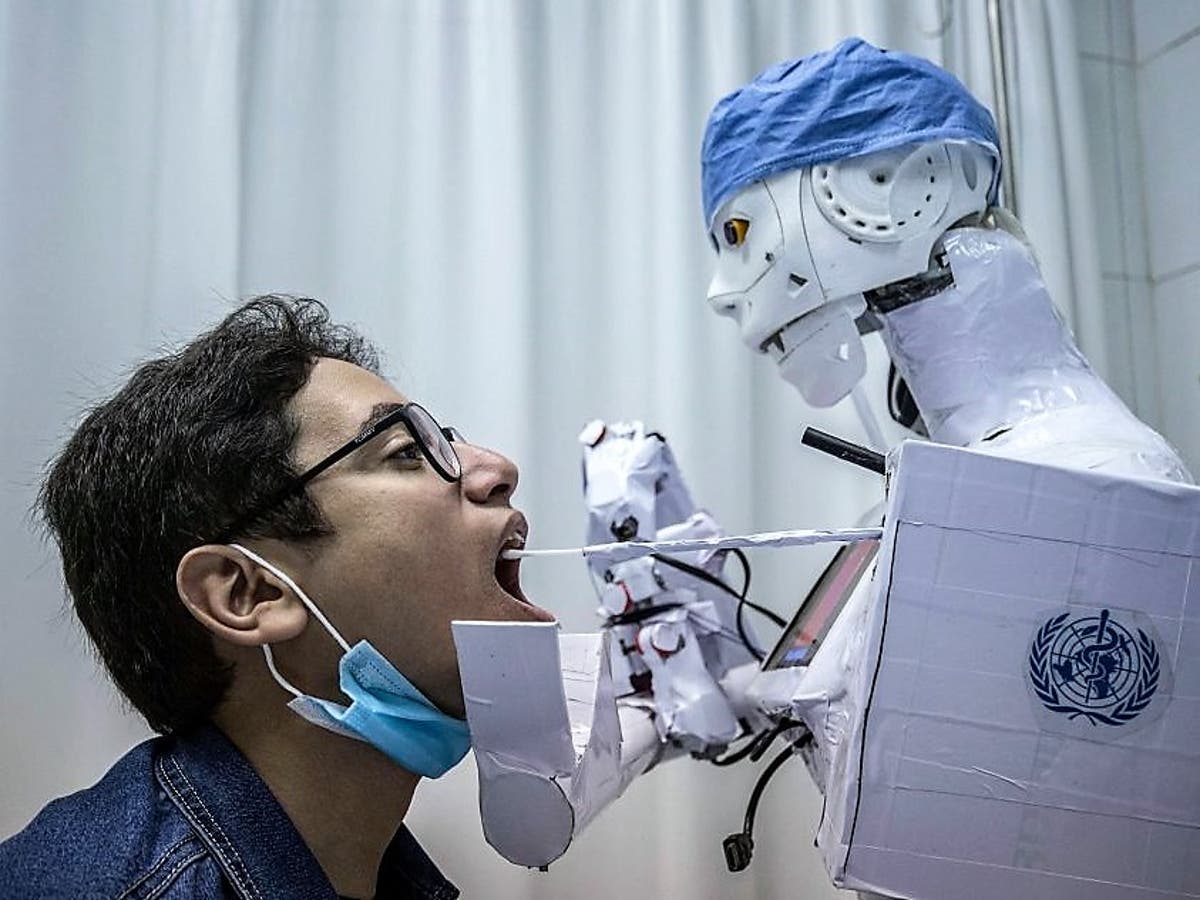

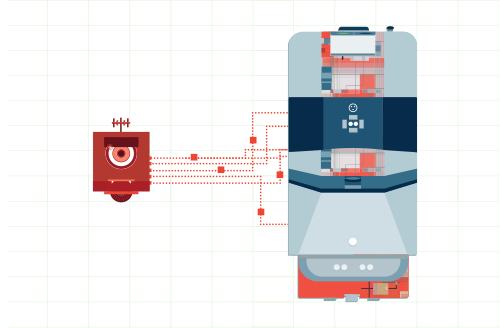

Researchers discovered five critical zero-day vulnerabilities in Aethon TUG autonomous hospital robots, allowing potential attackers to disrupt medication delivery, interfere with hospital operations, and access sensitive patient data. The flaws, which posed significant risks to health and privacy, were patched after coordinated disclosure and remediation efforts. [AI generated]