The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

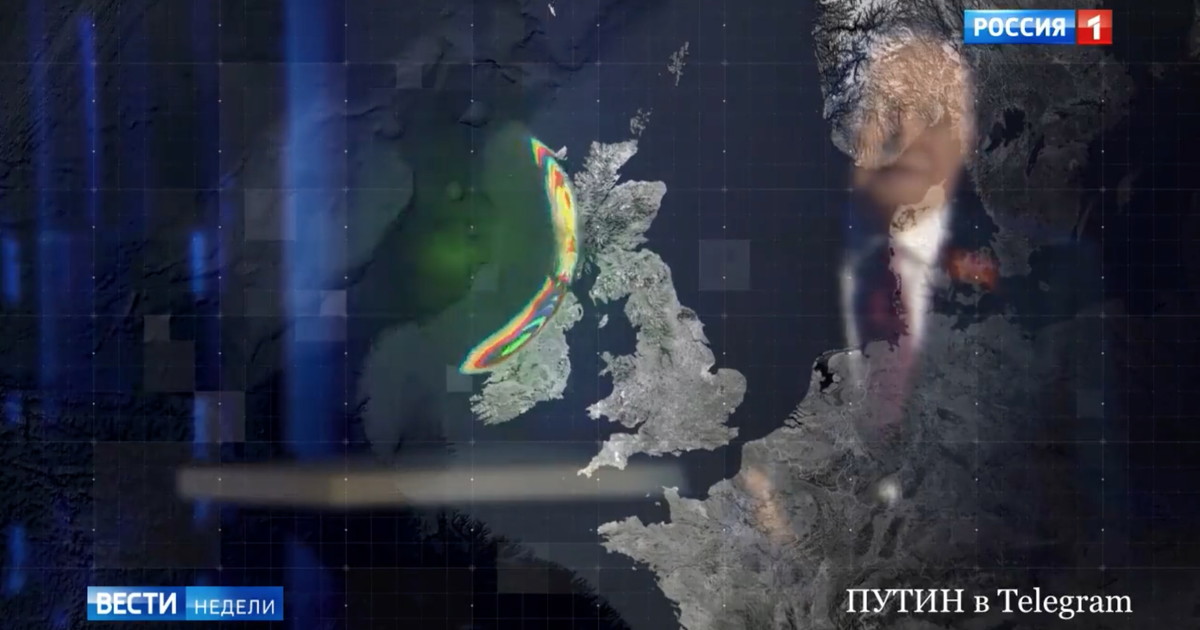

Russian state TV, via anchor Dmitry Kiselyov, threatened the UK with the Poseidon autonomous underwater drone, capable of carrying nuclear warheads and creating a radioactive tsunami. The broadcasts highlighted the AI-driven weapon's destructive potential, raising concerns about the catastrophic risks posed by AI-enabled military systems, though no attack has occurred. [AI generated]

Why's our monitor labelling this an incident or hazard?

The Poseidon drone qualifies as an AI system because it is an autonomous underwater vehicle capable of high-speed navigation and delivery of a nuclear payload. The threat of its use implies a plausible risk of catastrophic harm to people, communities, and the environment through destruction and radioactive contamination. Because no harm has occurred and the article reports only the threat, this is a potential-harm scenario. It therefore fits the definition of an AI Hazard: the development and potential use of this AI-enabled weapon system could plausibly lead to an AI Incident involving mass destruction and radioactive harm. [AI generated]