The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

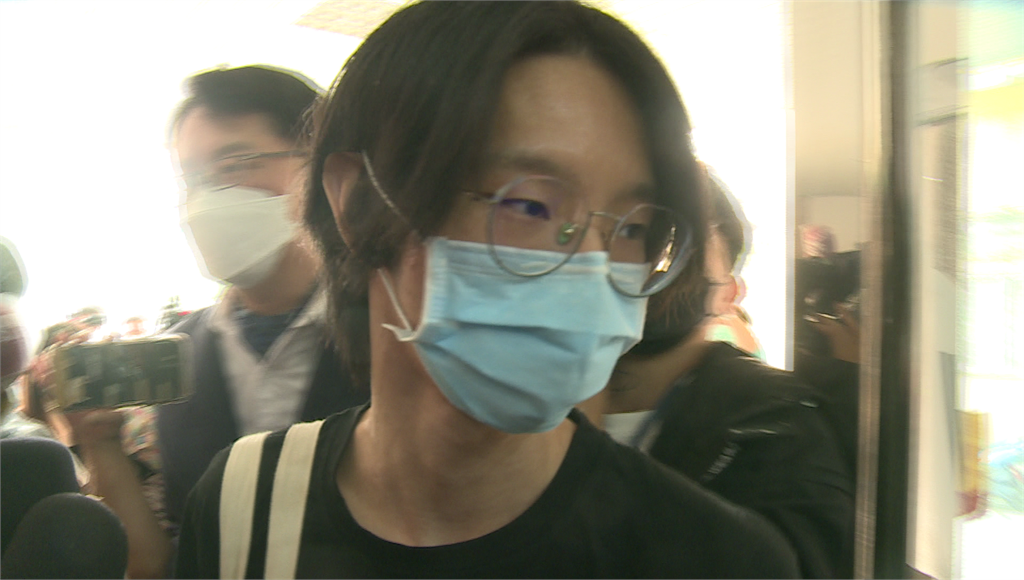

YouTuber Zhu Yuchen (Xiao Yu) and an accomplice used AI deepfake software to create and sell non-consensual pornographic videos featuring the faces of 119 public figures, causing severe psychological harm and privacy violations. The case led to criminal charges, civil lawsuits, and calls for stricter digital crime laws in Taiwan. [AI generated]