The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

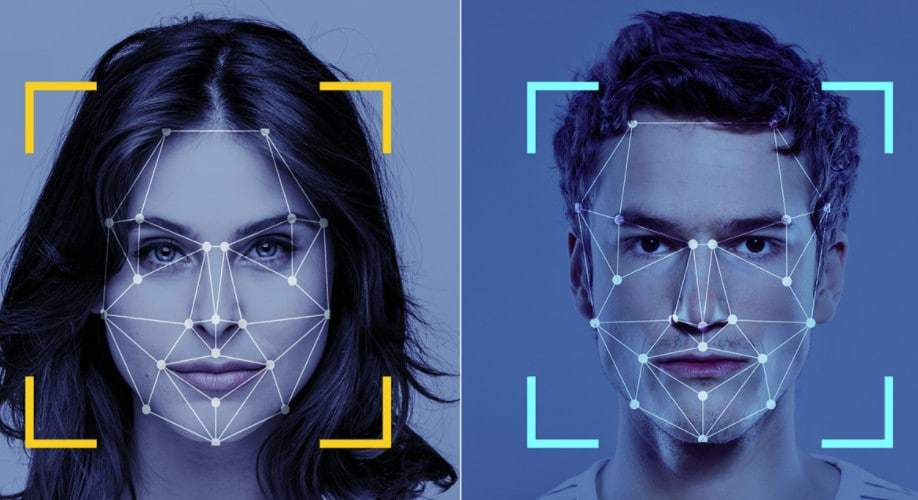

Multiple articles highlight growing concerns over AI-powered biometric technologies, such as facial recognition and DNA analysis, which pose risks of privacy violations, discrimination, and digital authoritarianism. The debate centers on balancing beneficial uses with the dangers of misuse, especially in surveillance-heavy states like China, prompting calls for stronger regulation. [AI generated]

Why's our monitor labelling this an incident or hazard?

The article explicitly references AI-enabled biometric technologies used for surveillance and facial recognition, which are AI systems by definition. It discusses the potential for these technologies to lead to harms such as violations of privacy, human rights abuses, and digital authoritarianism, particularly in the context of China's surveillance practices. Although no concrete harm event is described, the article emphasizes a credible risk of significant future harm and notes calls for moratoriums and stronger regulation. This fits the definition of an AI Hazard, as the development and use of these AI systems could plausibly lead to AI Incidents involving human rights violations and harm to communities. Because the article warns about plausible future harms and governance responses rather than reporting a realized harm, it is neither an AI Incident nor Complementary Information. It is not unrelated, because it clearly involves AI systems and their societal risks. [AI generated]