The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

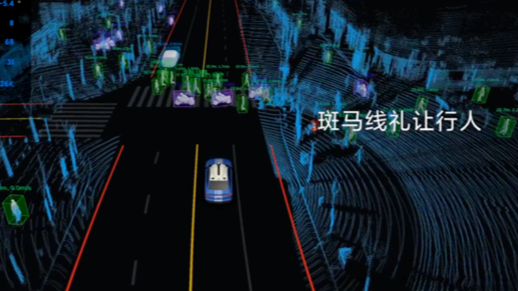

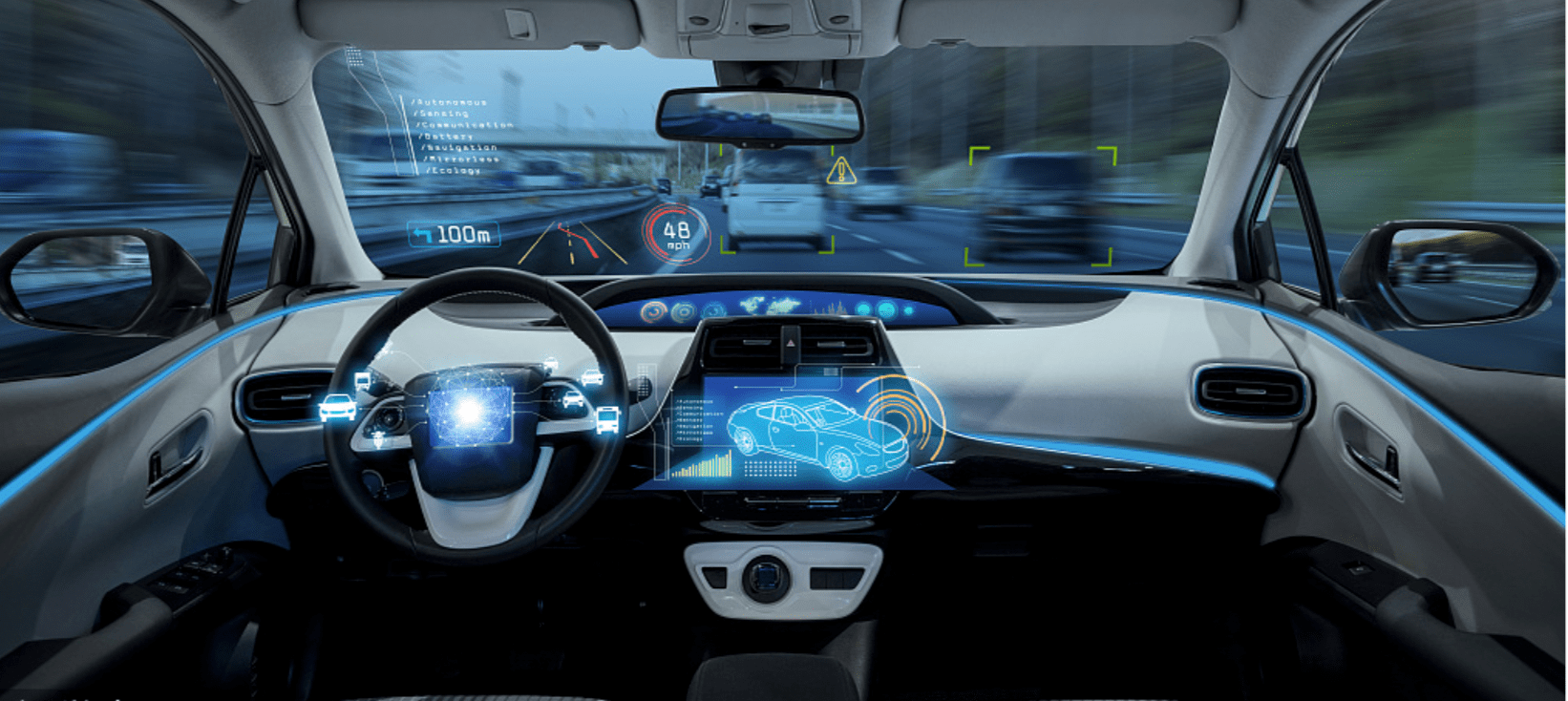

An XPeng vehicle in Ningbo, China, crashed while its lane-centering assisted-driving system was active. The system failed to detect a stalled vehicle ahead, and the crash resulted in injuries and fatalities. XPeng is cooperating with authorities in the investigation. The incident highlights risks associated with AI-assisted driving systems. [AI generated]