The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

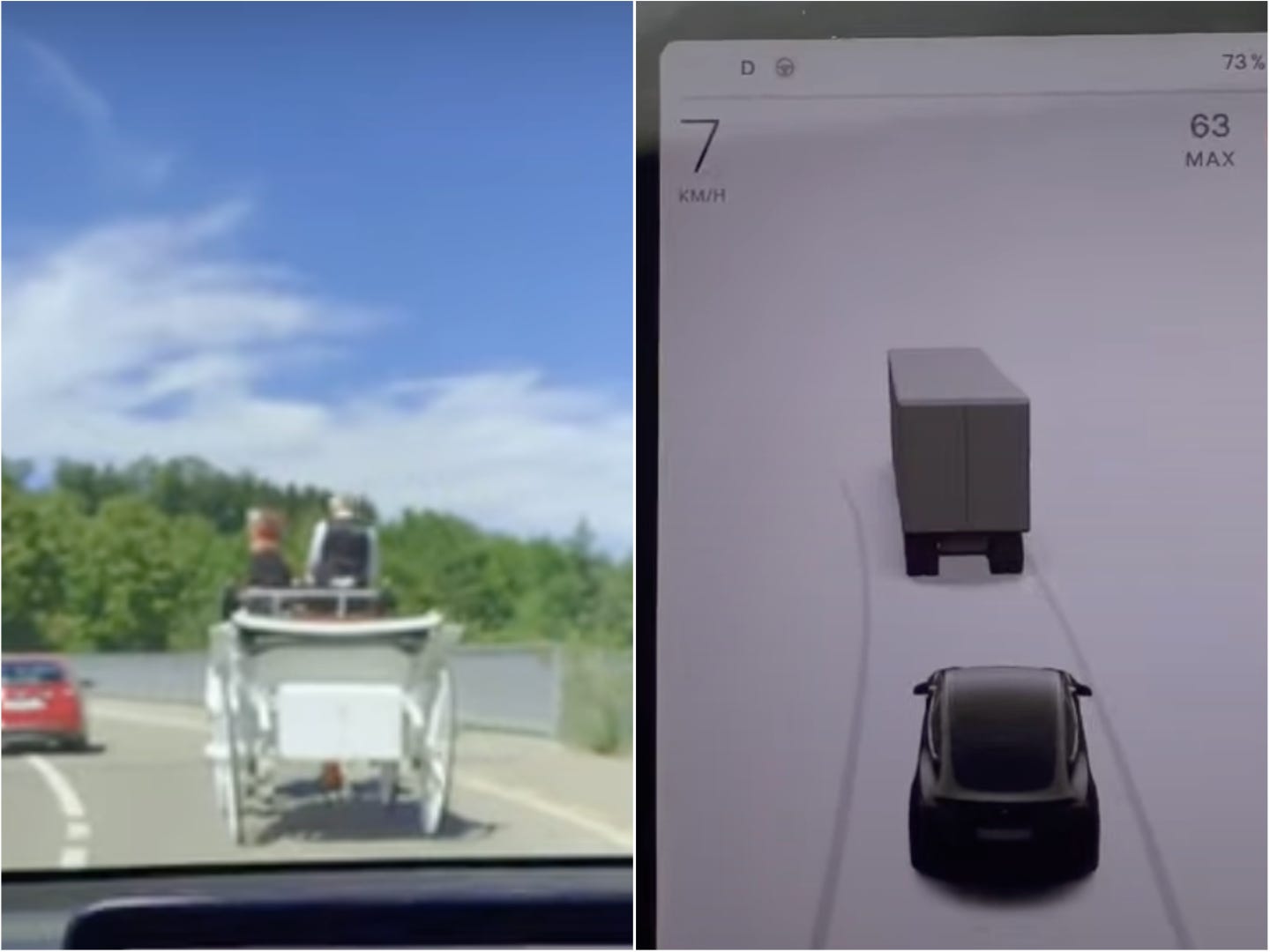

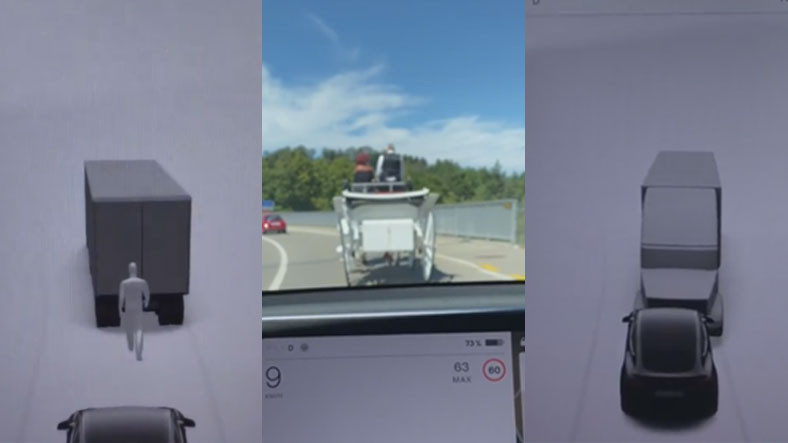

Tesla's Full Self-Driving (FSD) AI system failed to correctly identify a horse-drawn carriage during a beta test, repeatedly misclassifying it as a truck or van. The incident, widely shared on social media, highlights ongoing object-recognition issues in Tesla's AI, though no harm or accident occurred. [AI generated]