The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

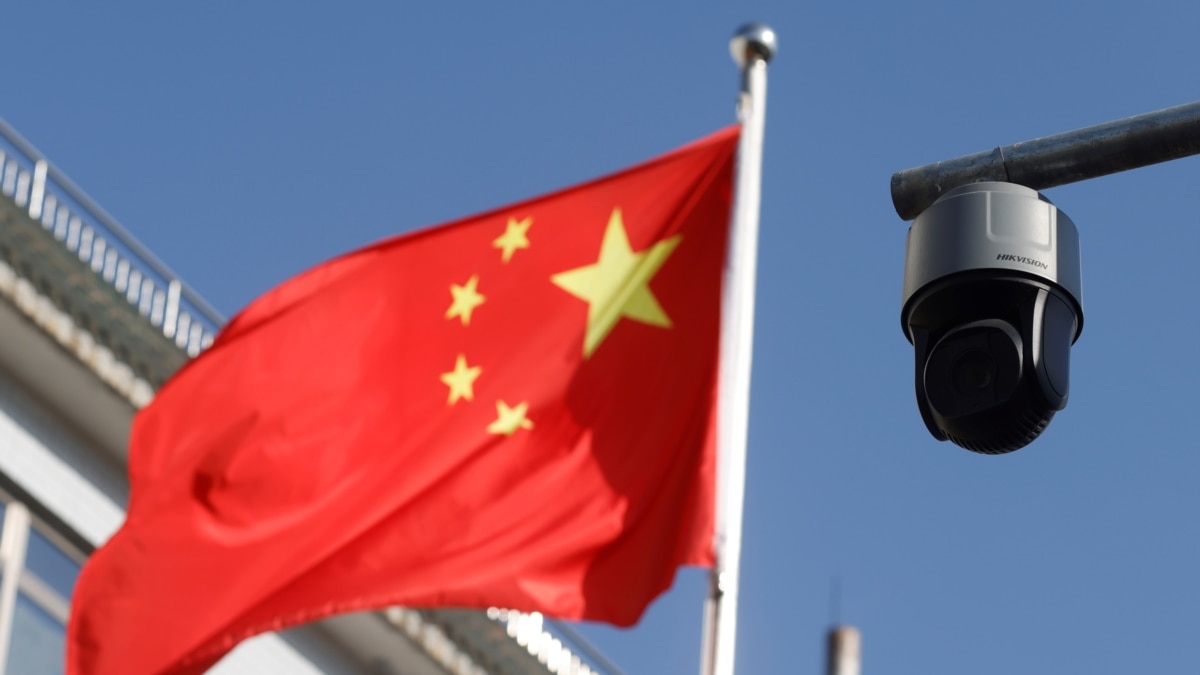

China has deployed over 500 million AI-enabled surveillance cameras, using facial recognition and predictive analytics to monitor and suppress its population, including minorities and dissidents. This mass surveillance, described as creating an 'AI totalitarian state,' has resulted in widespread violations of human rights and fundamental freedoms. [AI generated]