The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

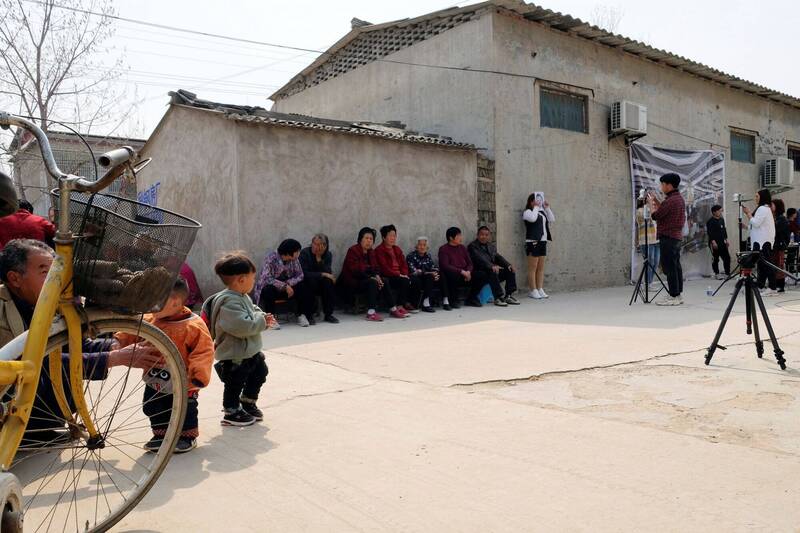

Chinese authorities collected facial data from rural villagers in Henan, offering small gifts such as cooking oil in exchange, to train AI facial recognition systems. These systems enable mass surveillance and are used by police to monitor, classify, and discriminate against ethnic minorities, raising serious human rights concerns over privacy and ethnic profiling. [AI generated]

Why's our monitor labelling this an incident or hazard?

The event involves AI systems (facial recognition algorithms) developed and deployed by the Chinese government for mass surveillance. The collection of facial data to train these systems and their deployment to identify, classify, and track individuals constitute a direct use of AI leading to violations of human rights and fundamental freedoms. The article details realized harms, including the surveillance, classification, and tracking of citizens, among them vulnerable groups. This fits the definition of an AI Incident under violations of human rights and breaches of legal protections. [AI generated]