The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

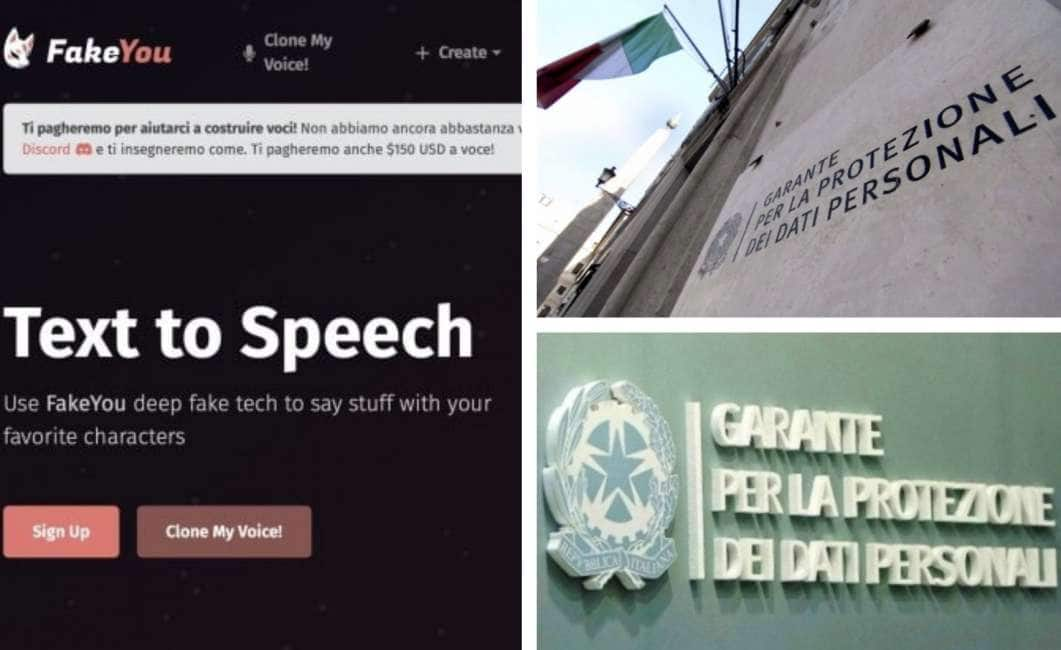

The Italian Data Protection Authority has launched an investigation into FakeYou, an AI app that generates synthetic voices of public figures. The probe follows concerns about potential misuse, including the spread of deepfake audio impersonating politicians, which raises risks of privacy violations and misinformation. No specific harm has yet been reported. [AI generated]

Why's our monitor labelling this an incident or hazard?

FakeYou uses AI to generate synthetic voices, so it qualifies as an AI system. The privacy authority's investigation was triggered by concerns about potential misuse of personal data and the risk that misleading audio clips could deceive people. However, the article does not describe any realized harm, such as injury, rights violations, or disruption, caused by the AI system. The event concerns plausible risks and the regulatory response, not an incident with actual harm. It therefore qualifies as an AI Hazard, because misuse of AI-generated synthetic voices and personal data could plausibly cause future harm, but not yet as an AI Incident. [AI generated]