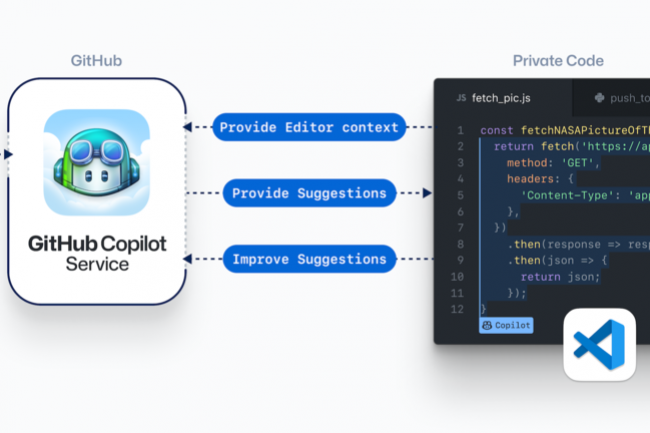

The article explicitly discusses a legal complaint against GitHub Copilot, an AI system, alleging violations of open source licenses and privacy laws. These allegations concern intellectual property rights and legal obligations, which fall under harm category (c): violations of human rights or breaches of obligations under applicable law intended to protect intellectual property rights. However, the complaint is ongoing, and the article does not report that these violations have been legally established or that concrete harm has resulted from the AI system's malfunction or misuse. Instead, it provides expert legal perspectives and analysis of the complaint's claims and implications. This fits the definition of Complementary Information: it updates and contextualizes the AI ecosystem and the legal responses to it without describing a new AI Incident or AI Hazard. At this stage, there is no direct or indirect evidence of realized harm, or of plausible future harm, beyond the legal dispute itself.