The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

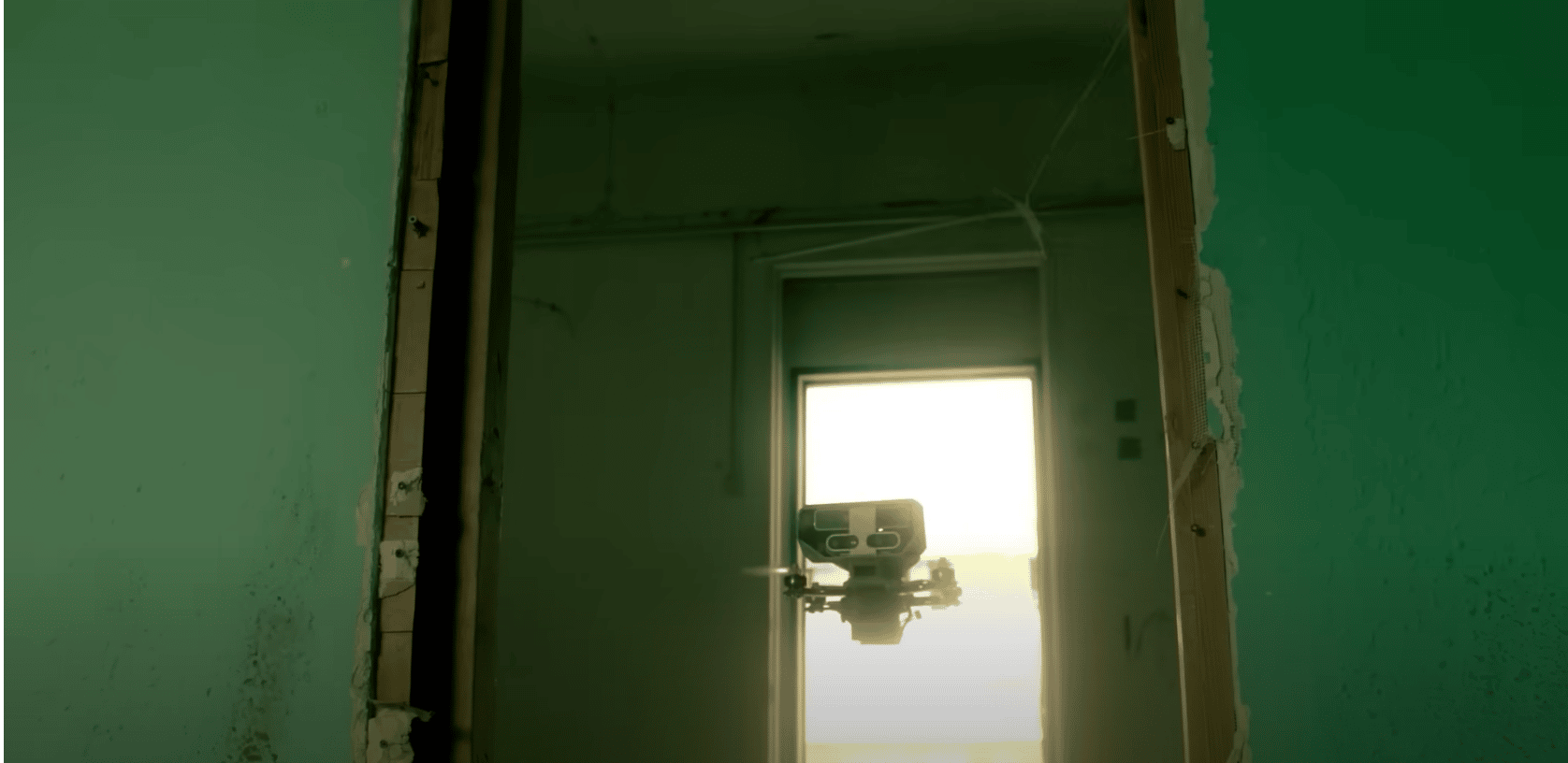

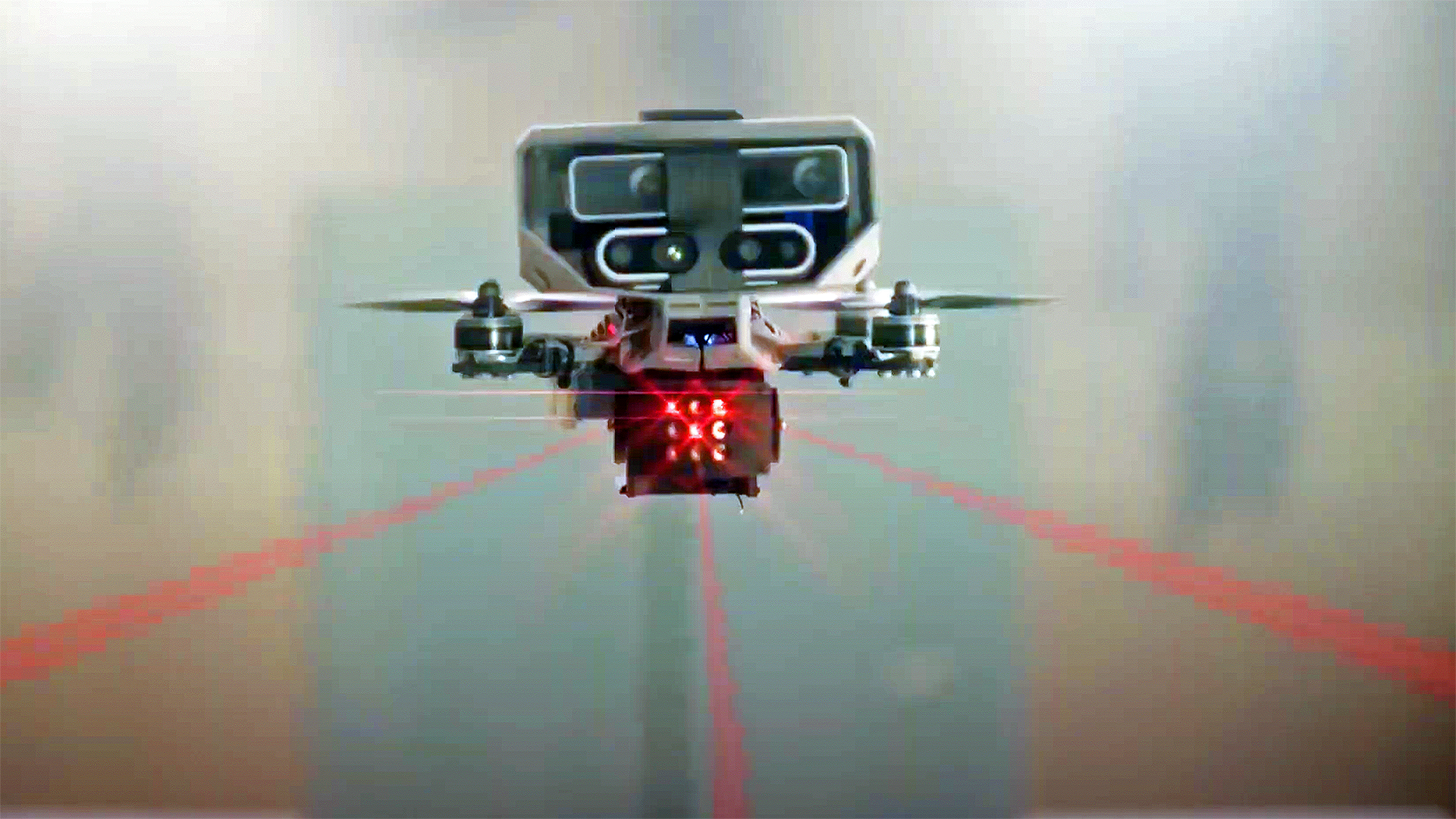

Israeli defense firm Elbit Systems has unveiled the Lanius, a micro suicide drone equipped with advanced AI for autonomous navigation, mapping, and target identification in urban environments. The drone can reportedly distinguish between combatants and civilians, raising concerns about AI-driven use of lethal force and the risk of future harm.[AI generated]