The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

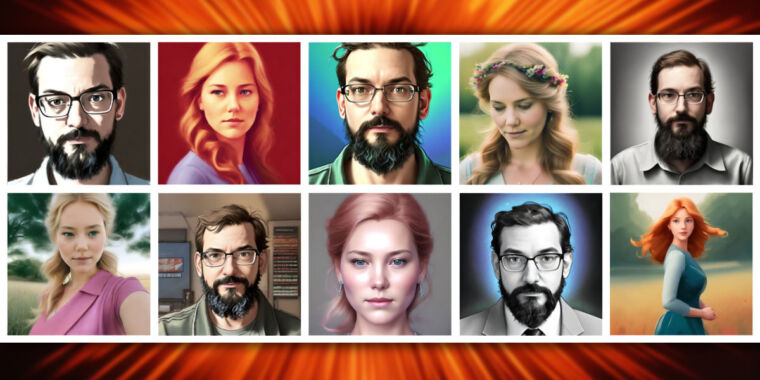

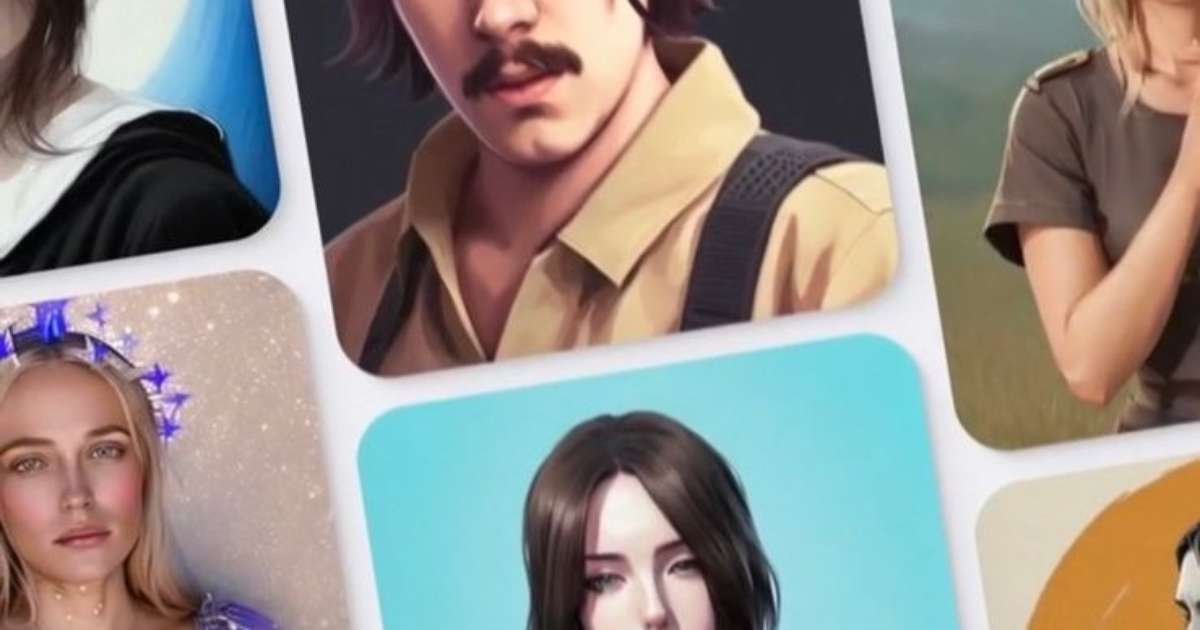

Cybersecurity expert Alexander Forasko warns that AI-powered photo-editing apps such as Lensa may expose users to digital identity theft. Uploaded personal images could be misused to create deepfakes, fake accounts, and scams, potentially leading to financial fraud, although no specific incidents have been reported yet.[AI generated]
