The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

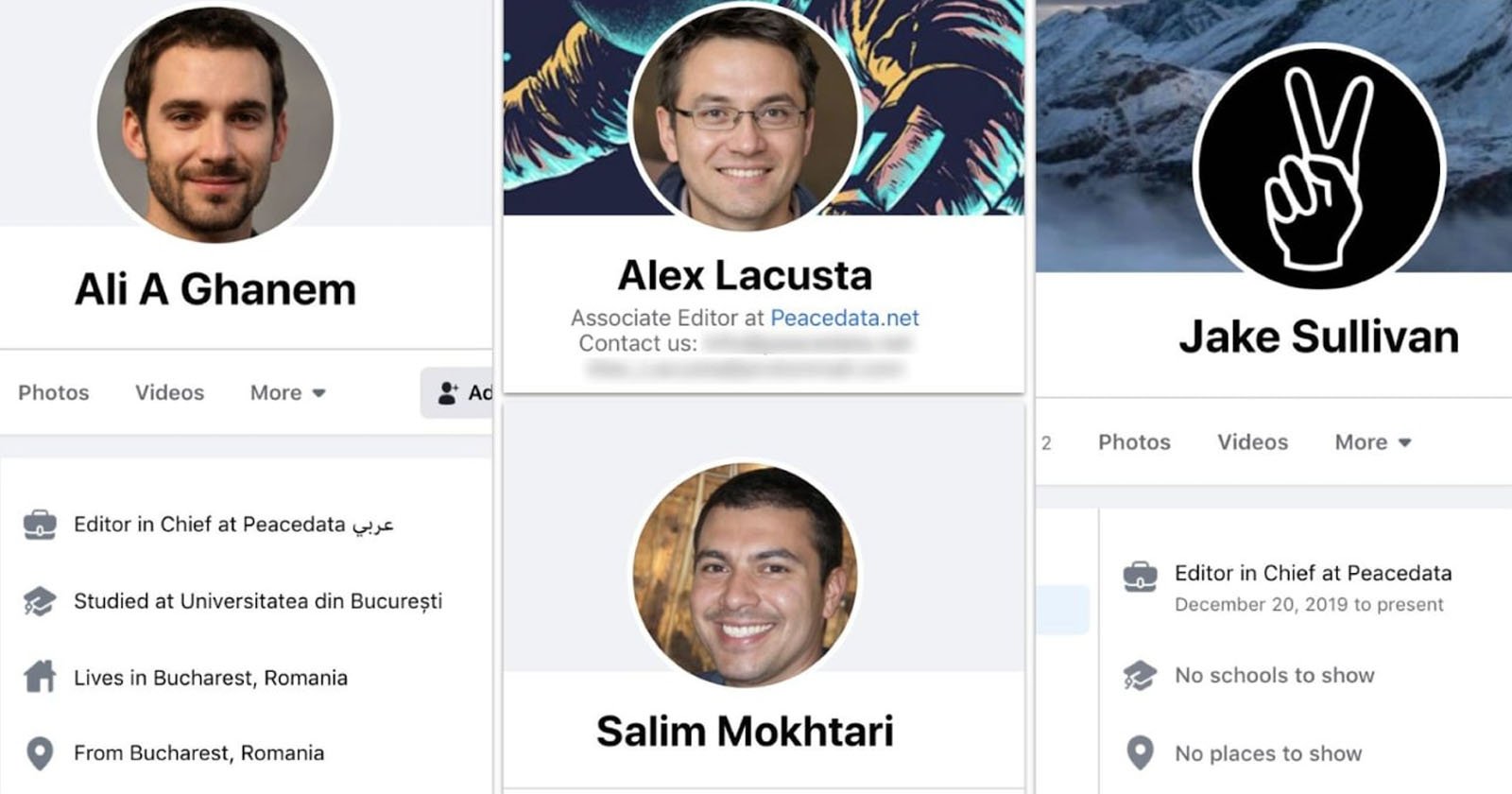

Meta reports a sharp rise in the use of AI-generated fake faces, created with generative adversarial networks (GANs), for fake social media profiles. These lifelike images enable malicious actors to conduct influence operations, spread propaganda, and perpetrate scams, causing widespread harm by manipulating online discourse and deceiving users. [AI generated]

Why is our monitor labelling this an incident or hazard?

The article explicitly states that threat actors are using AI-generated faces to run influence operations on social media, spreading propaganda and harassment that harm communities. The AI system (GANs generating fake faces) is directly involved in enabling these harms. The harm is realized, not just potential: Meta has taken down over 200 such networks. This fits the definition of an AI Incident because the use of the AI system has directly led to harm to communities through misinformation and manipulation. [AI generated]