The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

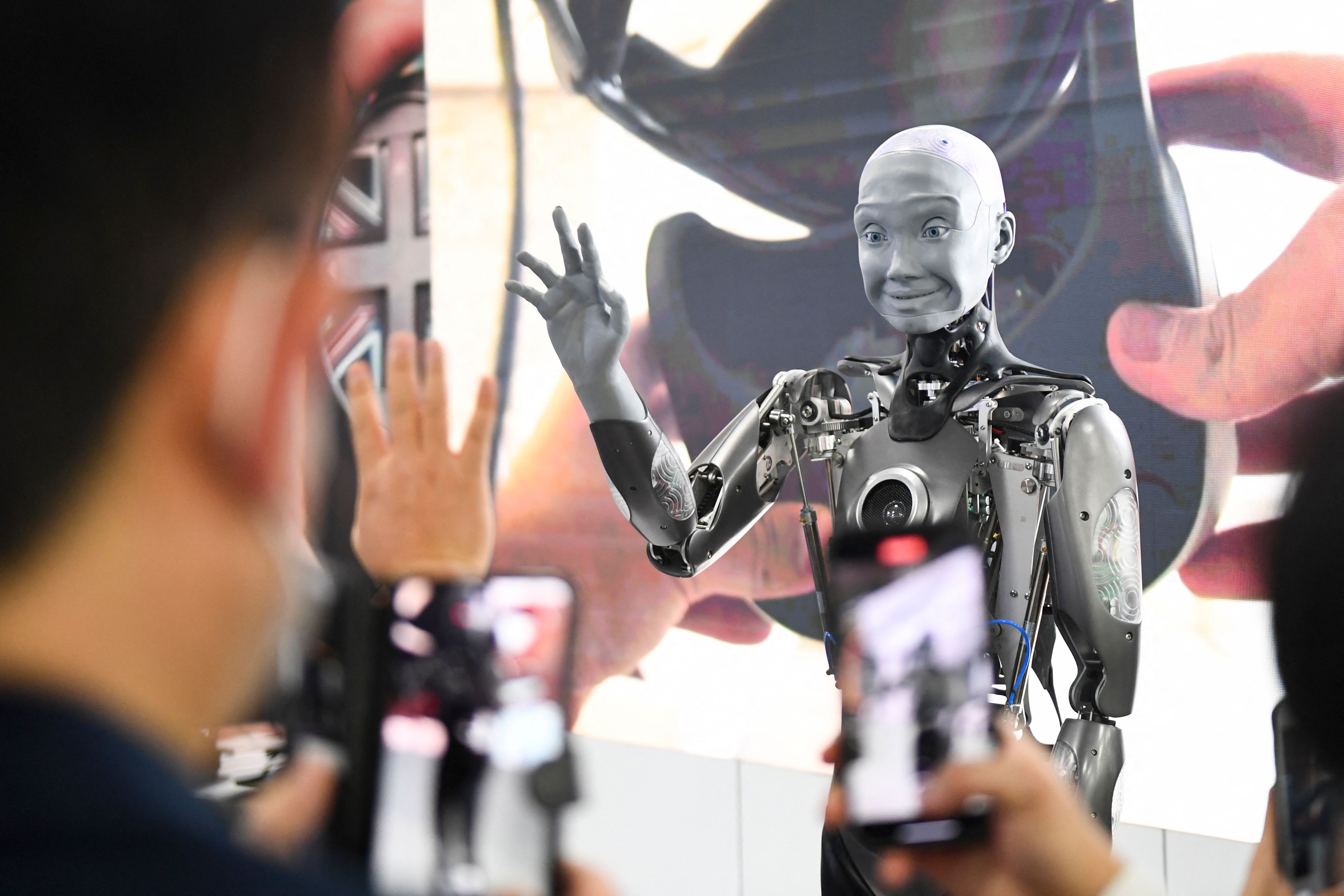

An AI-powered 'robot lawyer' developed by DoNotPay is set to assist a defendant in a real court case by listening to proceedings and providing real-time legal advice via smartphone. This unprecedented use of AI in legal defense raises concerns about the impact on defendants' rights and the fairness of judicial processes. [AI generated]
