The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

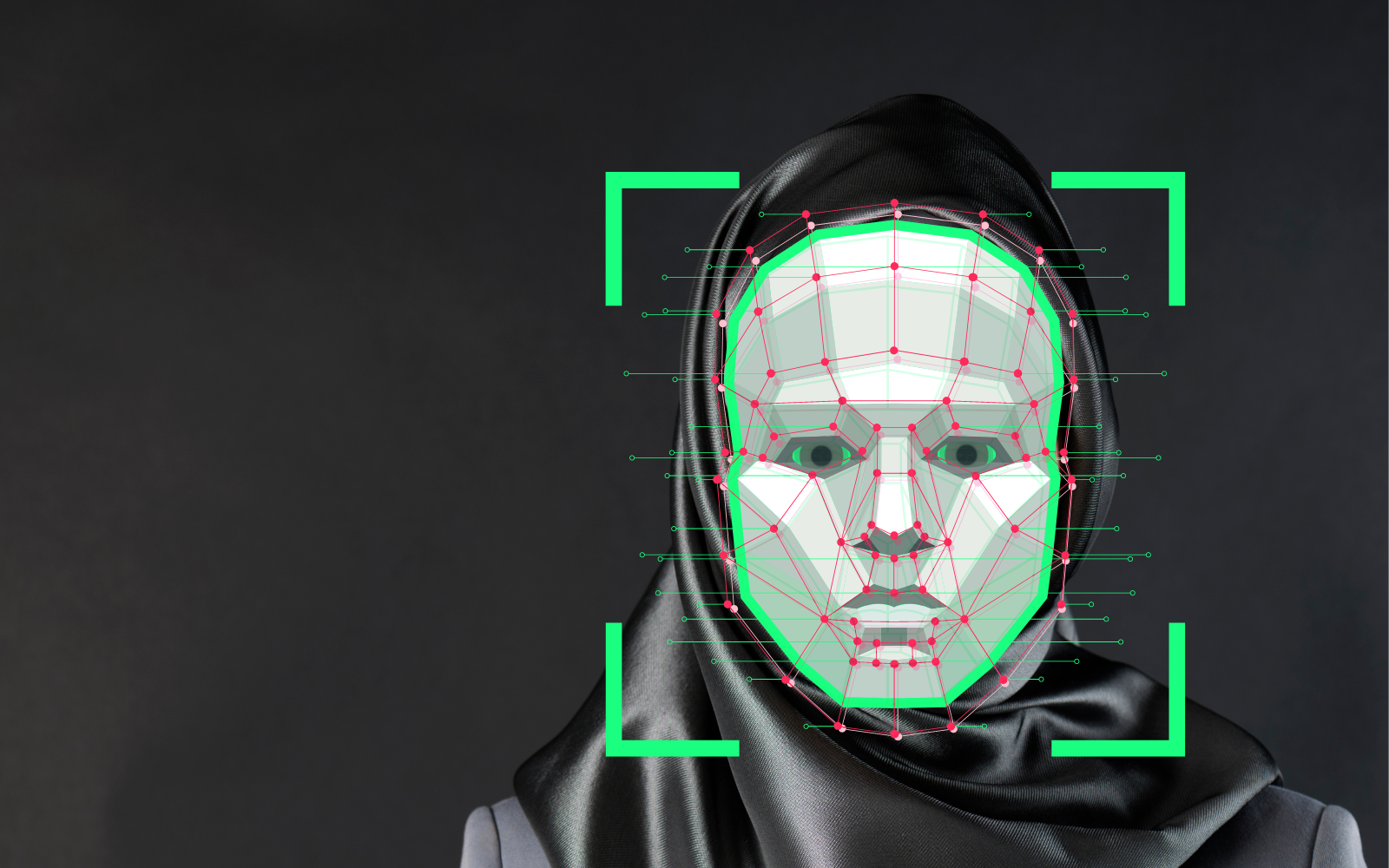

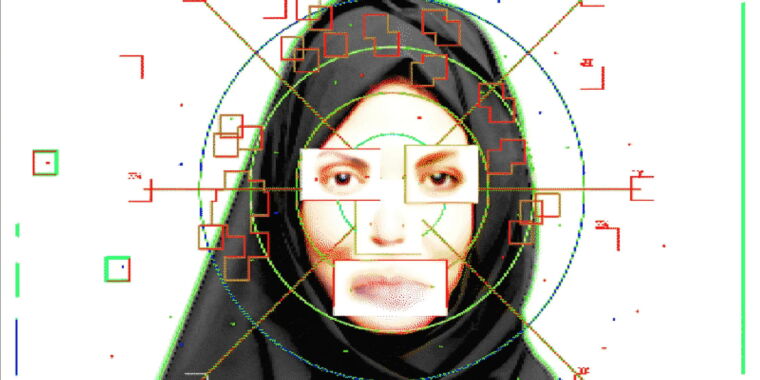

Iranian authorities are reportedly deploying AI-powered facial recognition technology to identify and penalize women who violate the country's strict hijab laws. The system enables remote surveillance, so women can be targeted and punished with fines or arrest without any direct police interaction, raising serious concerns about privacy, oppression, and human rights violations. [AI generated]