The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

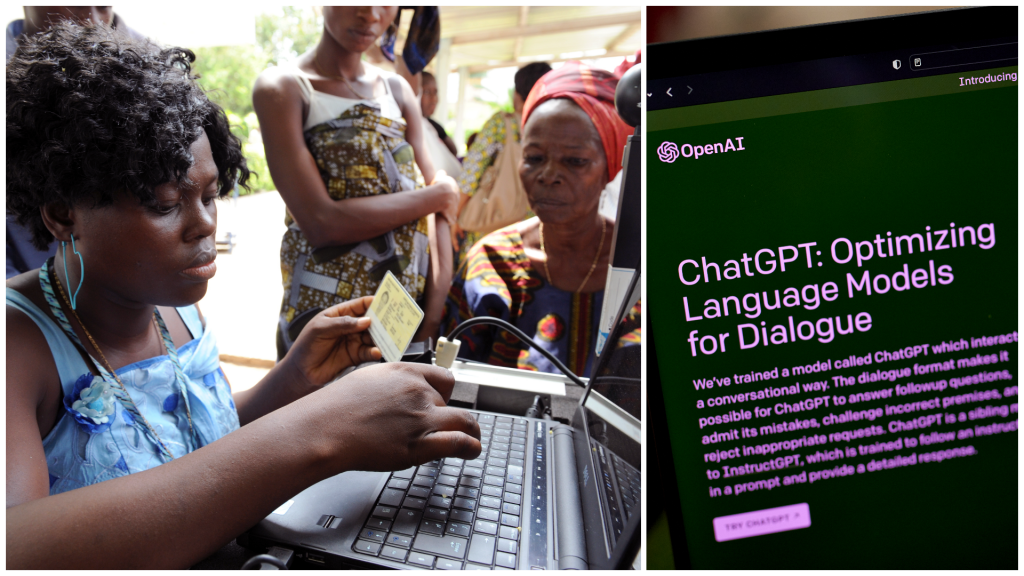

OpenAI outsourced the labeling of toxic and disturbing content used in ChatGPT's training to Kenyan workers who earned less than $2 per hour. Exposed to graphic material, these workers suffered psychological harm and endured poor working conditions, which raised concerns about labor exploitation and the human cost of developing AI safety systems.[AI generated]
