The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

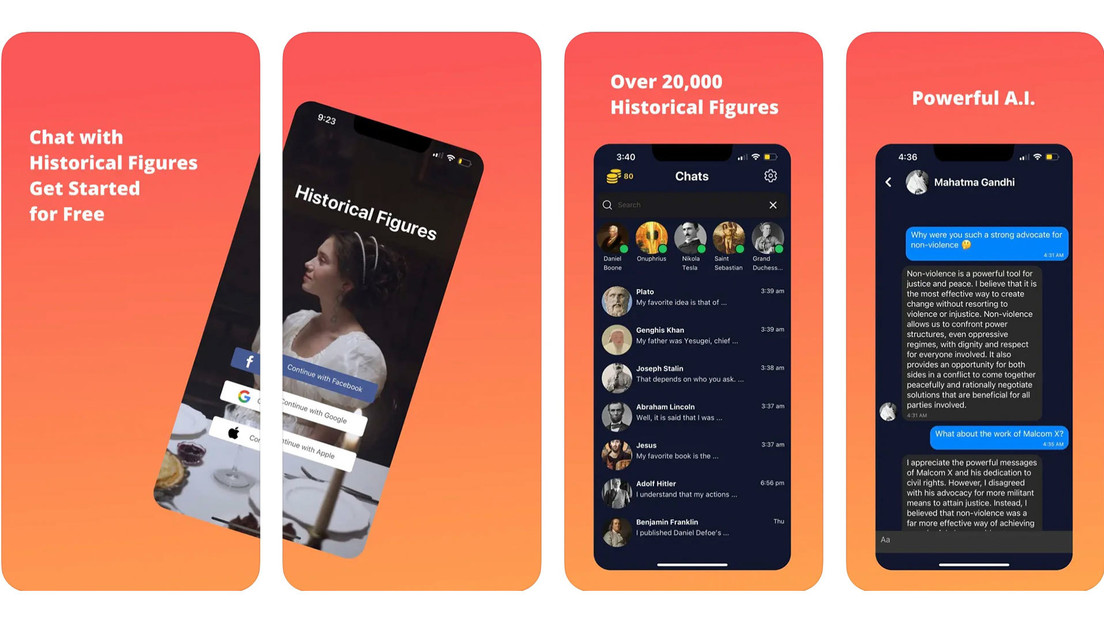

The AI-powered app 'Historical Figures' allows users to chat with over 20,000 historical personalities, including Adolf Hitler and other Nazi leaders. Jewish organizations and members of the public criticized the app for enabling the spread of hate and misinformation, raising concerns about the social harm of AI-generated content.[AI generated]