The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

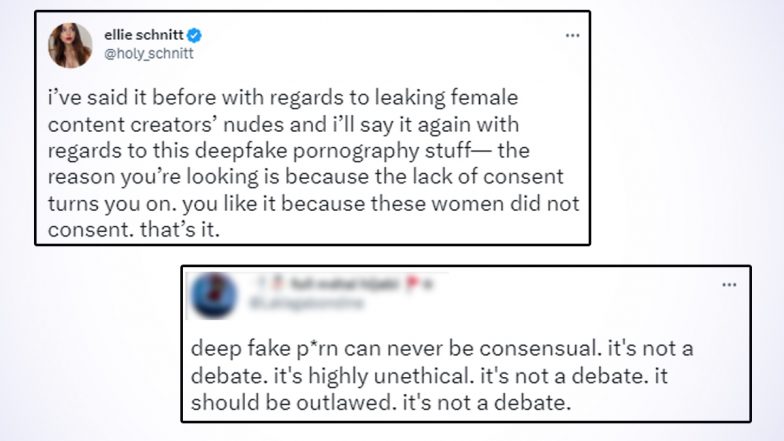

Twitch streamer Brandon "Atrioc" Ewing was caught viewing AI-generated deepfake pornographic videos of female streamers that had been created without their consent. The incident sparked public outrage and highlighted the harm and rights violations caused by AI systems used to produce non-consensual explicit content. It also caused significant reputational damage and emotional distress for the victims.[AI generated]