The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

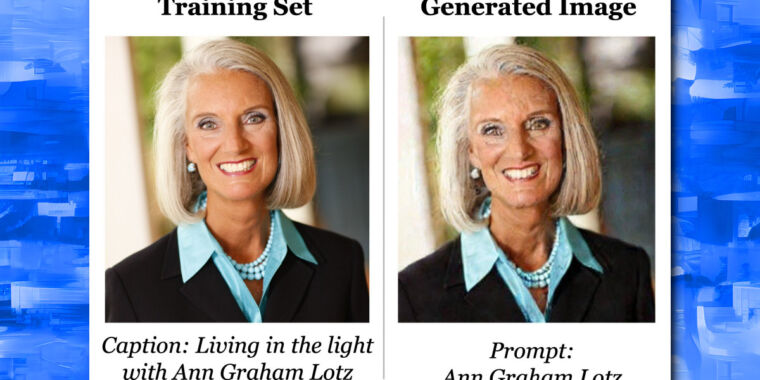

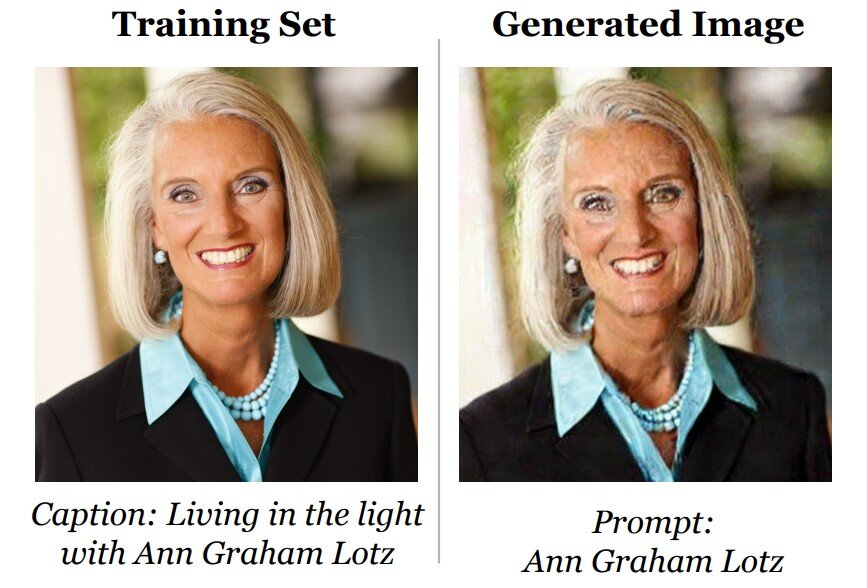

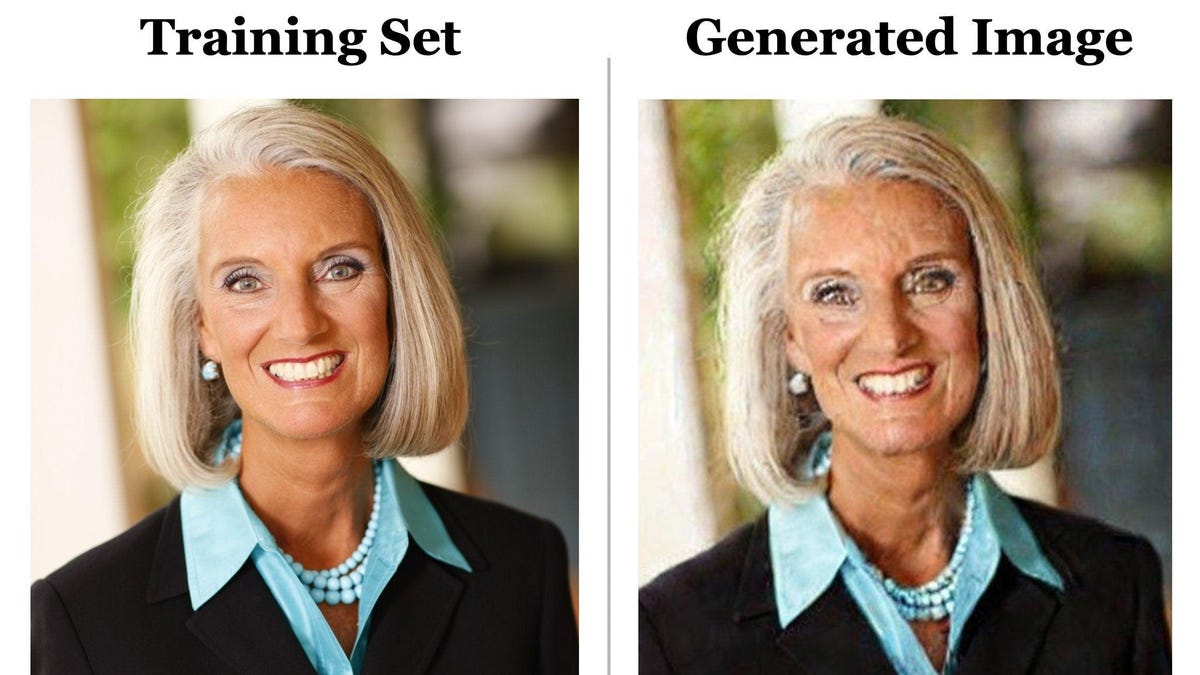

Researchers found that AI image generators such as Stable Diffusion and Imagen can produce non-consensual pornographic images, including child exploitation material, as well as exact replicas of real people's photos and copyrighted works. These outputs violate privacy, consent, and intellectual property rights, causing direct harm to individuals and artists. [AI generated]

Why's our monitor labelling this an incident or hazard?

The event involves AI systems (diffusion models) that have been shown to memorize and reproduce copyrighted images, which constitutes a violation of intellectual property rights, a form of harm under the AI Incident definition (c). The reproduction of copyrighted photos by the AI system is a direct consequence of its development and use, leading to realized harm to copyright holders. Therefore, this qualifies as an AI Incident rather than a hazard or complementary information.[AI generated]