The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

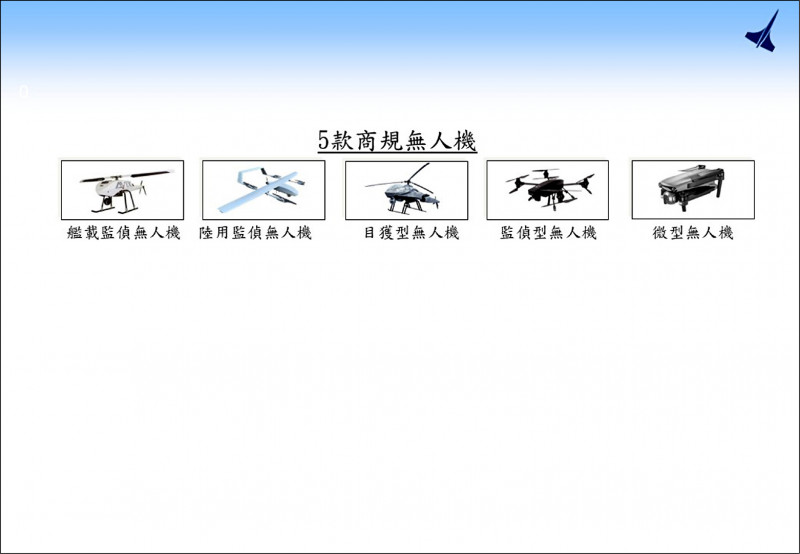

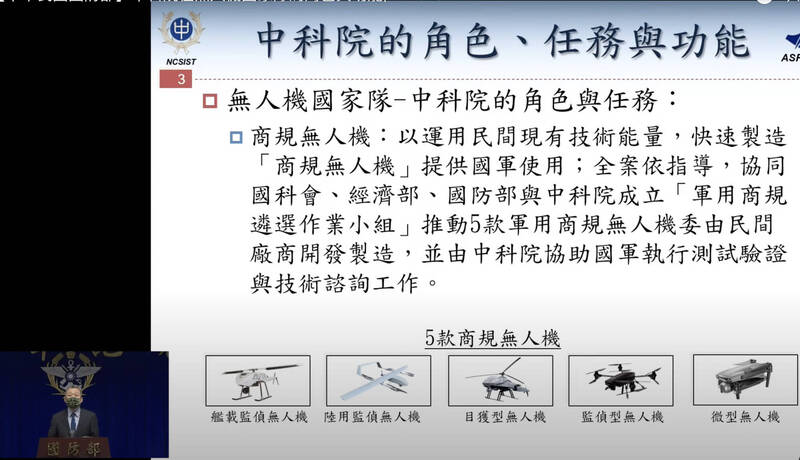

Taiwan's National Chung-Shan Institute of Science and Technology (NCSIST) and the Ministry of National Defense are mass-producing AI-enabled military drones for reconnaissance, attack, and cognitive warfare. These drones, capable of autonomous operations and real-time data processing, are being deployed for both direct combat and psychological operations, raising significant risks of AI-driven harm in conflict scenarios. [AI generated]