The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

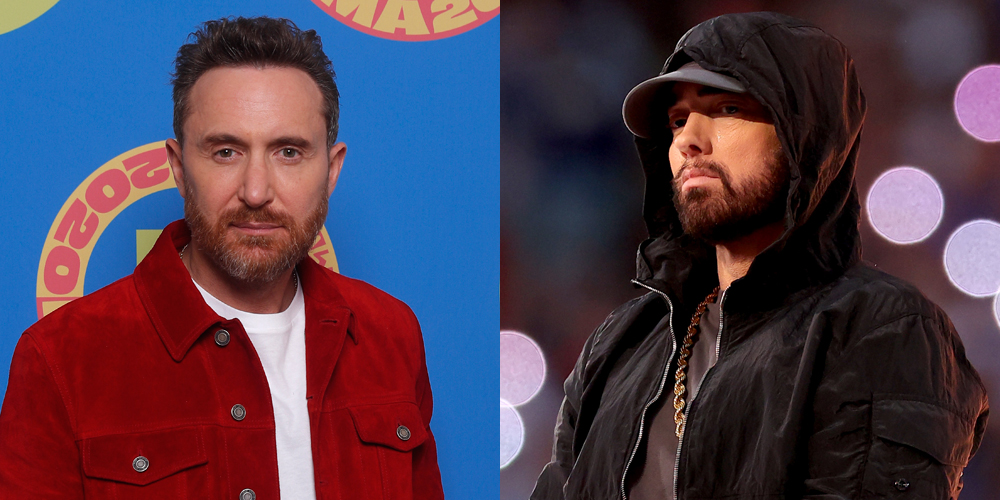

David Guetta used AI tools to generate lyrics and a deepfake of Eminem's voice for a new song, which he played at a live show. The act, done without Eminem's consent, sparked debate over AI's potential to infringe on artists' rights and mislead audiences, highlighting ethical and legal risks. [AI generated]