The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

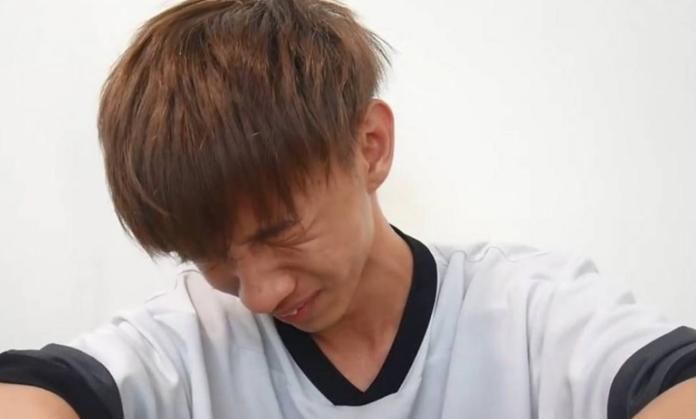

Taiwanese influencer Zhu Yuchen ('Xiao Yu') and his assistant used AI deepfake technology to superimpose the faces of celebrities and public figures onto pornographic videos without their consent, earning more than NT$13 million. The scheme harmed 119 victims and resulted in criminal convictions and multiple civil compensation orders, highlighting the serious privacy and reputational violations that AI misuse can cause.[AI generated]

Why is our monitor labelling this an incident or hazard?

The event explicitly involves the use of deepfake AI technology to manipulate videos by swapping faces without consent, causing serious harm to the victims' reputation, privacy, and personal rights. The harm has materialized and been legally recognized, with court rulings ordering compensation. Because the AI system's use is central to the harm, the event meets the criteria for an AI Incident under the categories of human rights violations and harm to individuals. It is therefore classified as an AI Incident.[AI generated]