The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

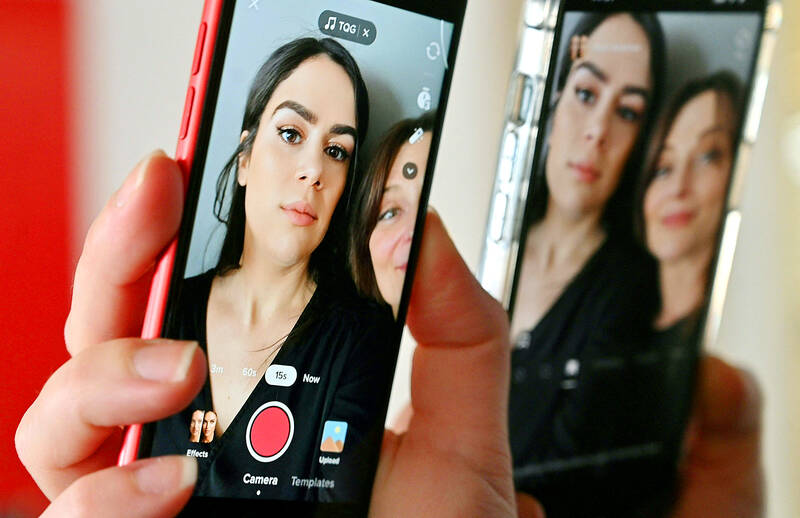

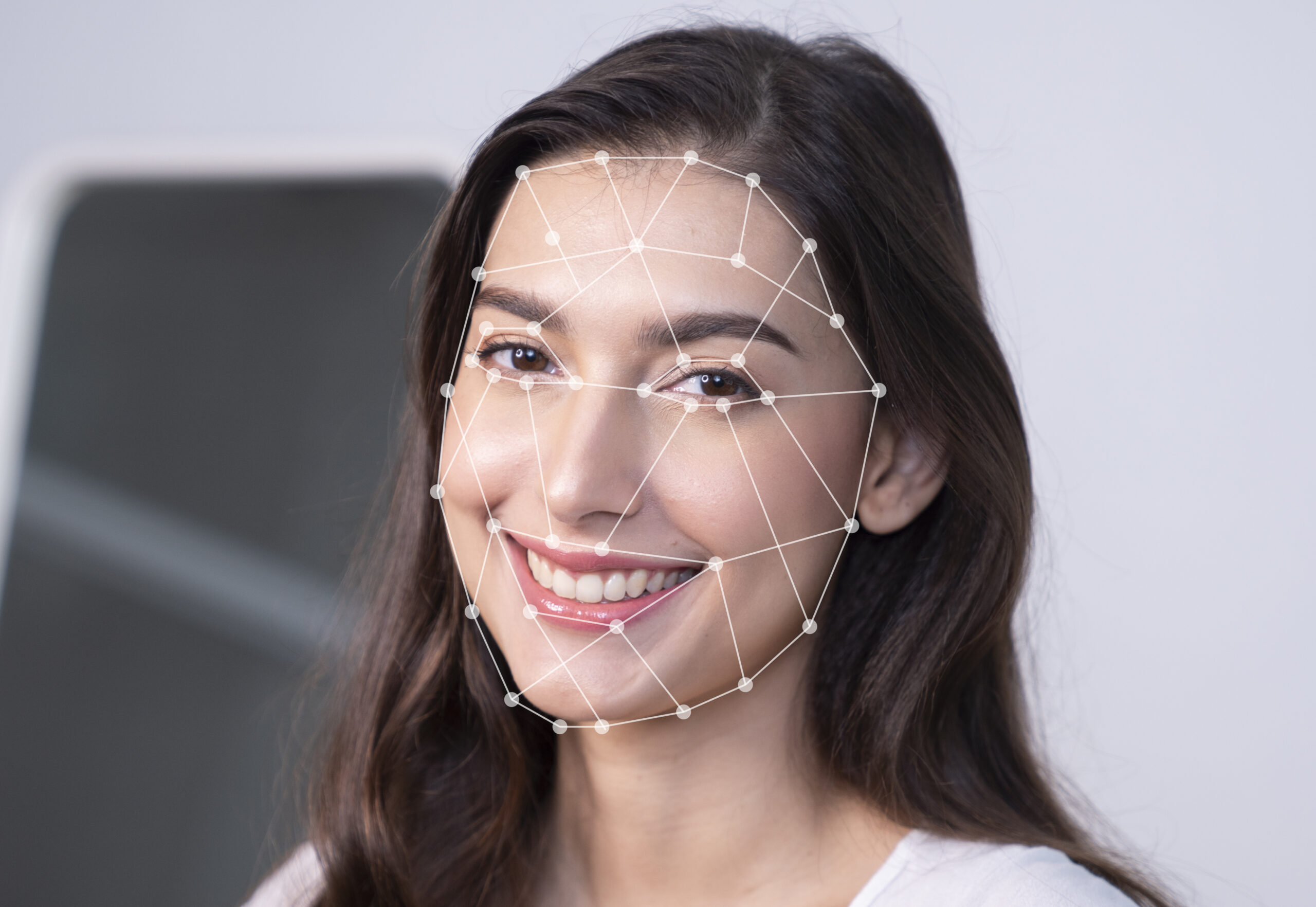

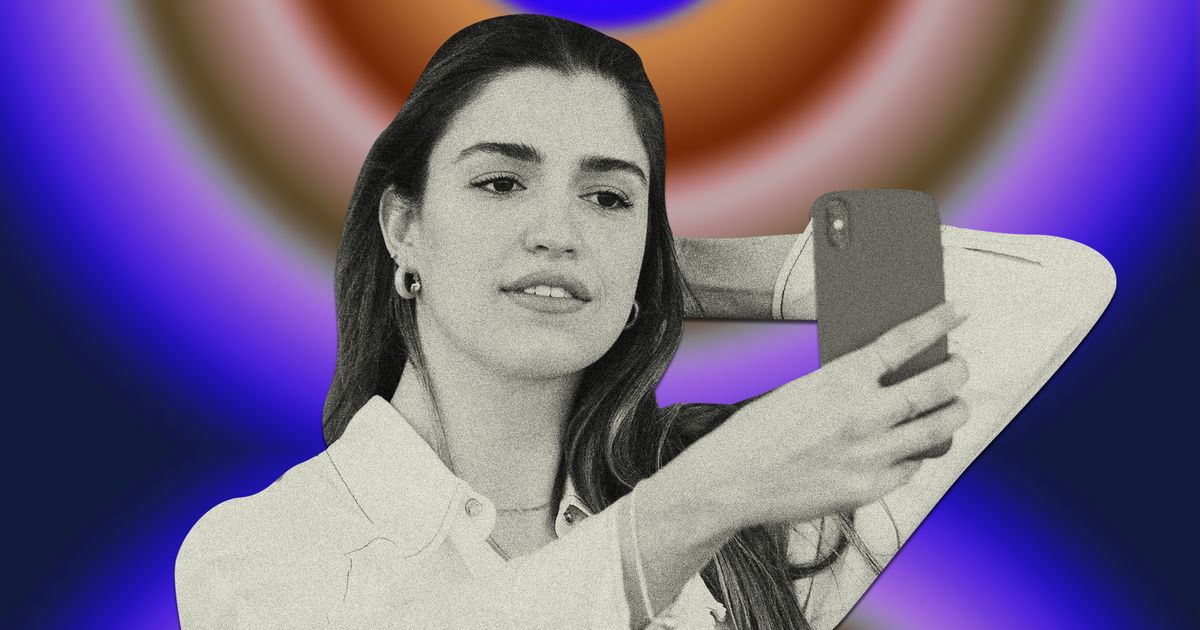

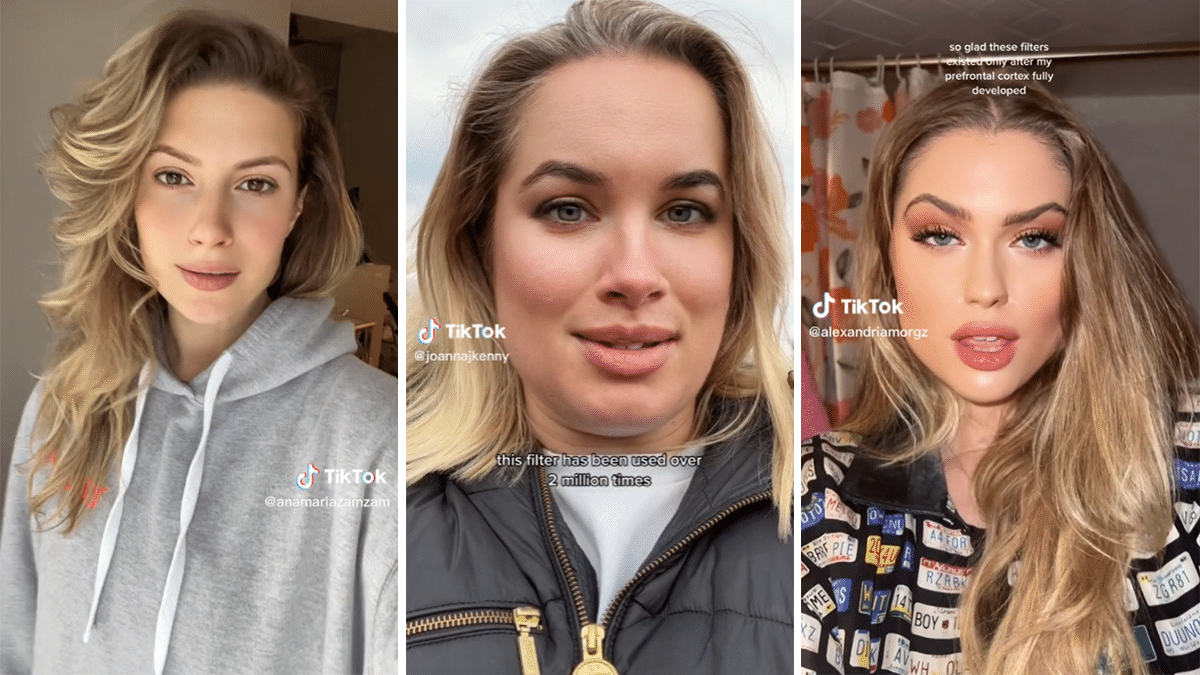

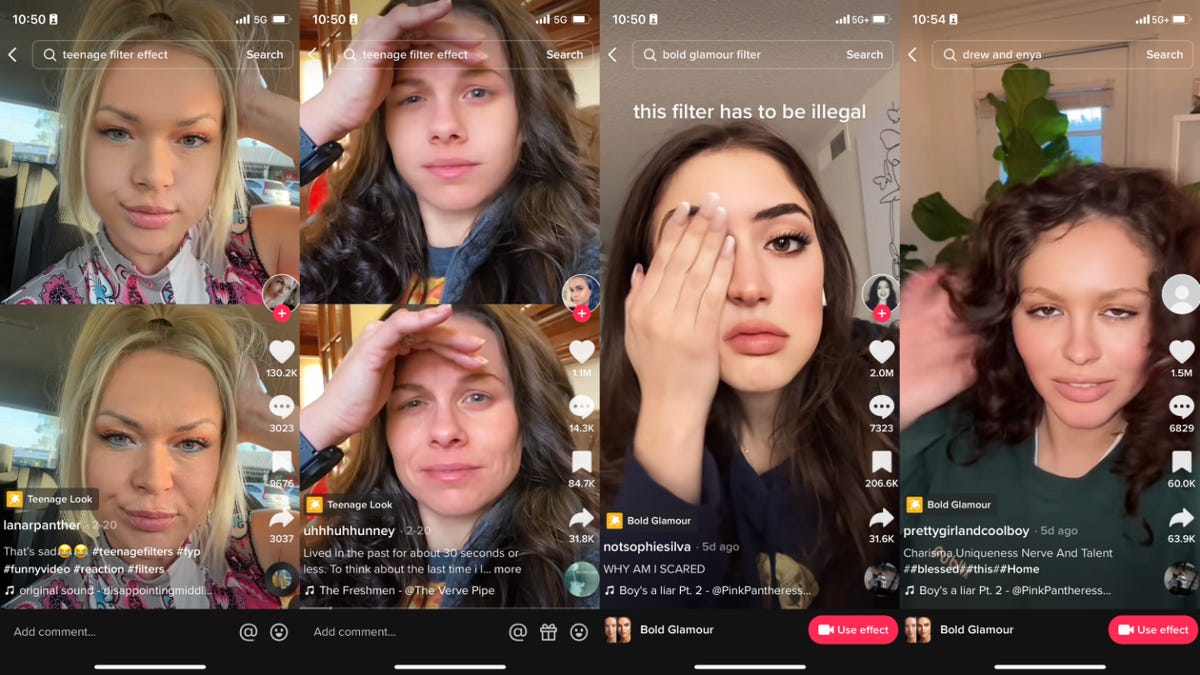

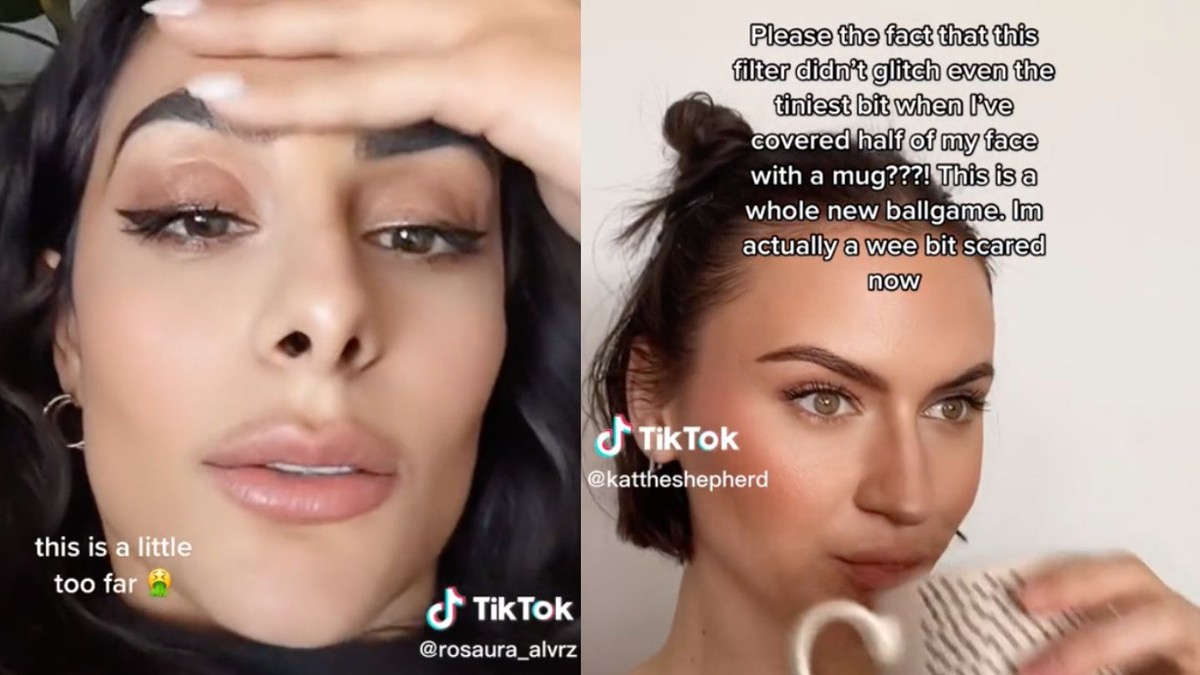

TikTok's 'Bold Glamour' AI-powered beauty filter, which realistically alters users' facial features, has sparked widespread concern over its psychological impact. Users, especially teenagers and young women, report increased insecurity, body dysmorphia, and pressure to seek cosmetic surgery, highlighting the filter's role in perpetuating harmful beauty standards and worsening mental health issues. [AI generated]
