The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

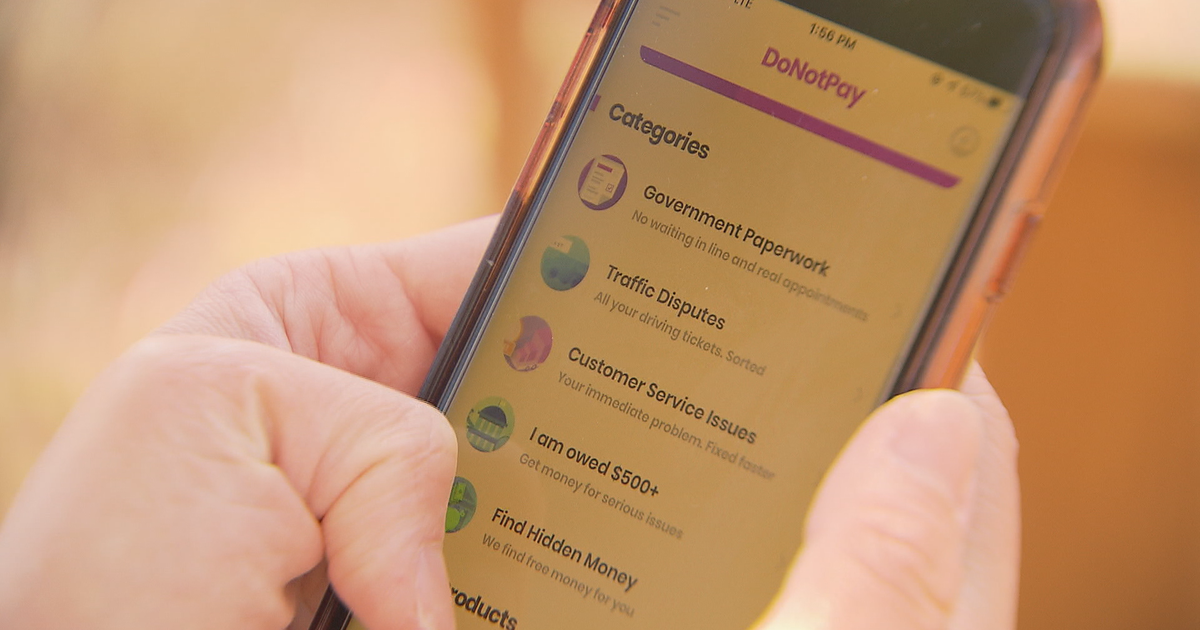

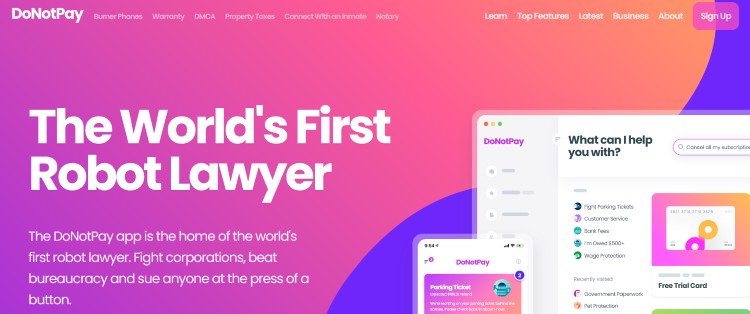

DoNotPay, an AI-powered legal chatbot, faces a class-action lawsuit alleging it practiced law without a license and provided substandard legal documents, causing harm to users. Plaintiffs claim the AI system misrepresented itself as a lawyer, resulting in ineffective legal assistance and potential violations of users' legal rights. [AI generated]
