The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

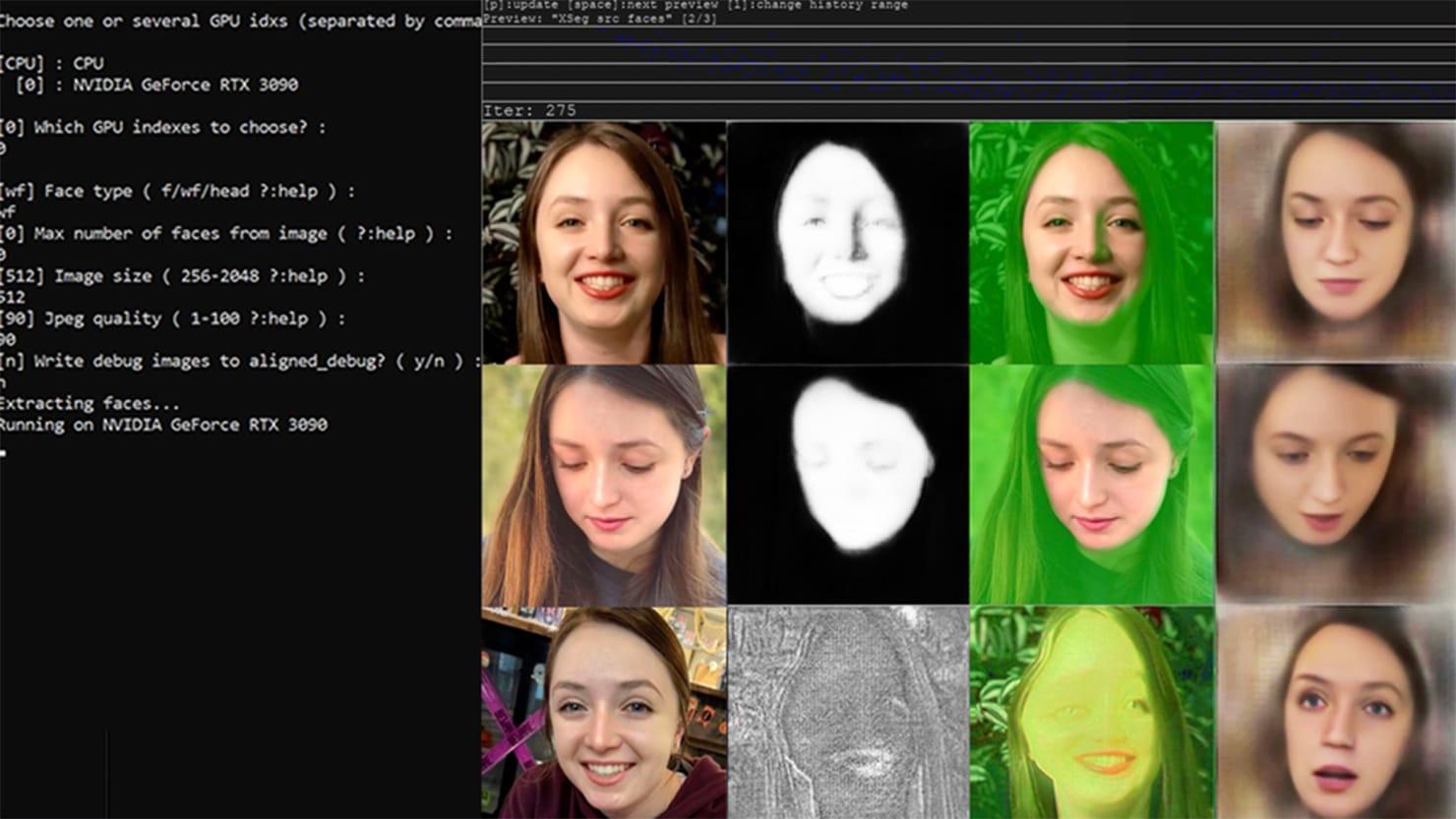

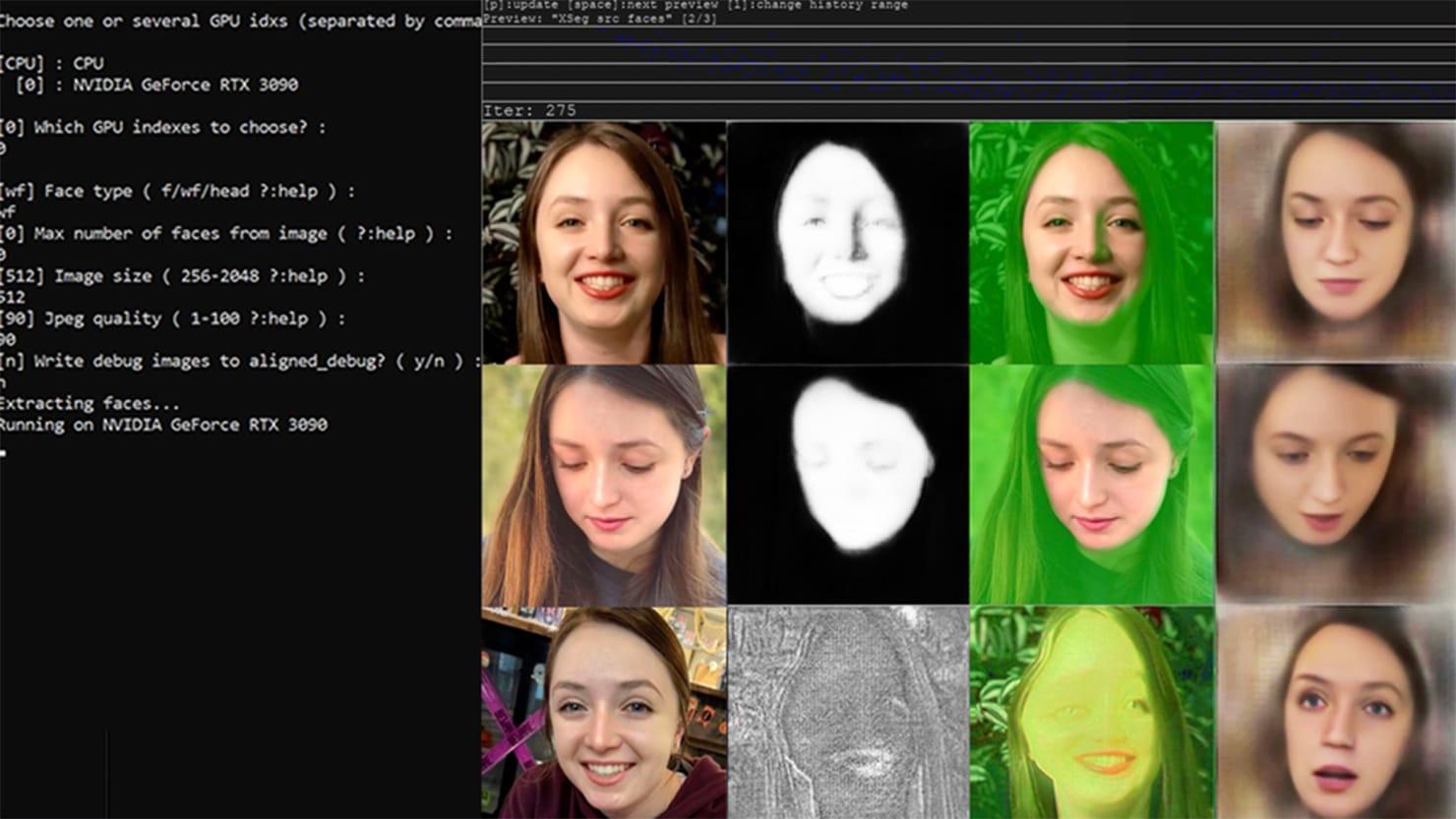

AI-powered deepfake technology is being used to create nonconsensual pornographic videos targeting both celebrities and ordinary individuals. Victims such as college student Taylor Klein suffer emotional distress, privacy violations, harassment, and threats to their safety. The proliferation of cheap deepfake apps has made this abuse widespread, highlighting serious risks to personal rights and consent. [AI generated]

Why is our monitor labelling this an incident or hazard?

The event involves the use of AI deepfake technology to create nonconsensual pornographic videos, a clear violation of privacy and consent and therefore a breach of fundamental human rights. The harm is realized and ongoing, with documented cases of emotional and reputational damage to victims. The AI system's role is pivotal: it enables the creation of realistic fake videos that would not otherwise be possible. The article also highlights the proliferation of, and growing demand for, such AI-generated content, reinforcing the direct link between AI use and harm. Hence, this is classified as an AI Incident. [AI generated]