The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

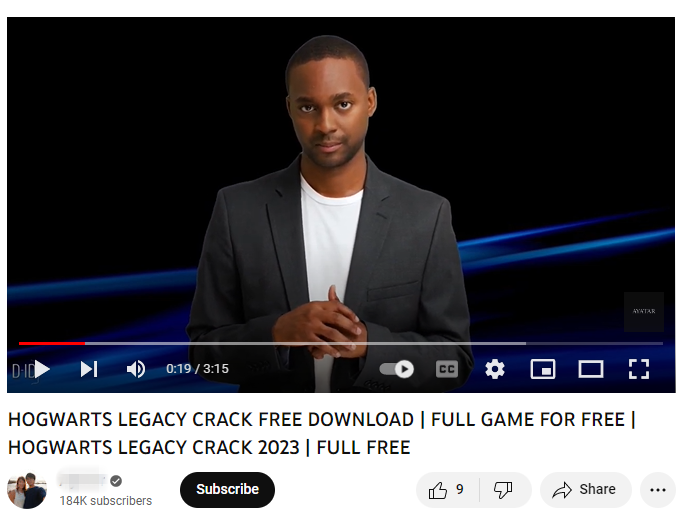

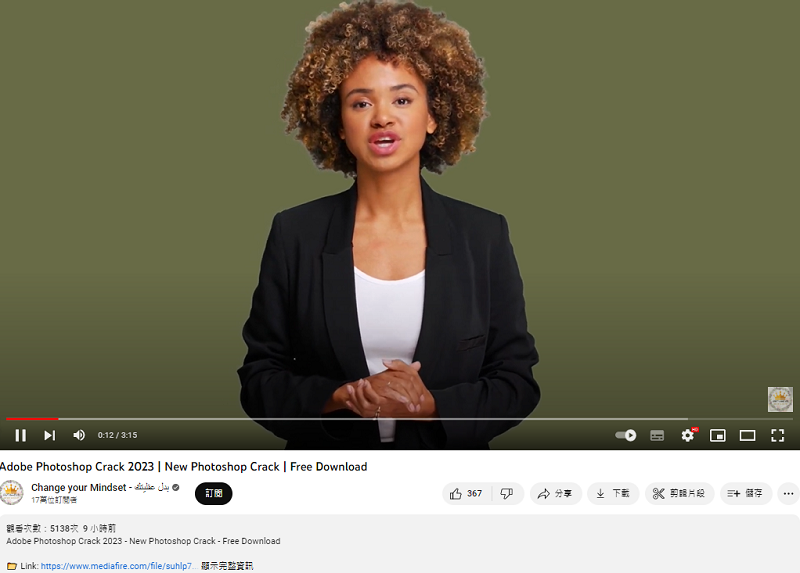

Cybercriminals are increasingly using AI-generated personas and avatars in YouTube videos to trick users into downloading malware disguised as cracked software. Use of this tactic has surged 200-300% month-on-month; it enables the theft of personal and financial data, directly harming individuals through AI-enabled deception. [AI generated]