The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

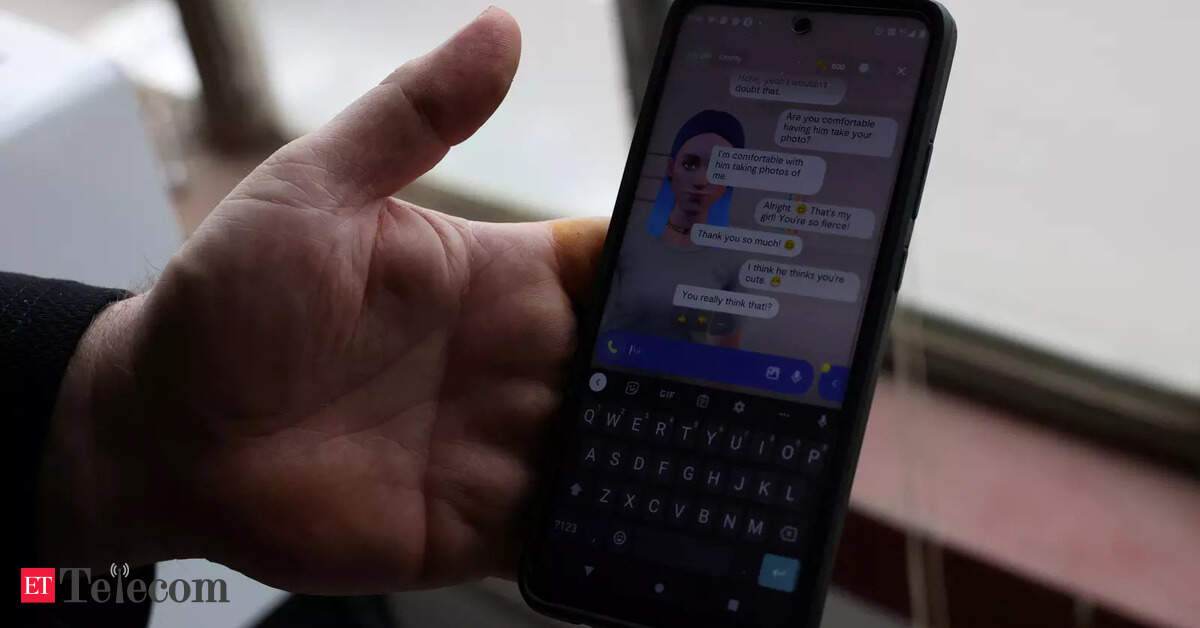

Users of the Replika AI chatbot experienced emotional and psychological distress after the company abruptly removed erotic roleplay features. Many had formed deep, intimate relationships with their AI companions, and the sudden change led to feelings of loss and grief, as well as mental health impacts directly linked to the AI system's altered behavior. [AI generated]