The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

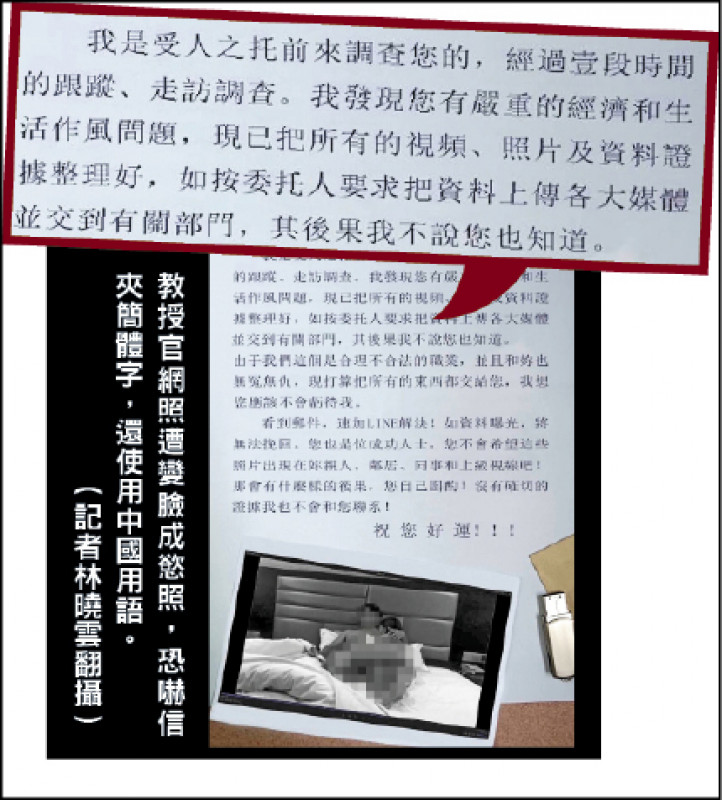

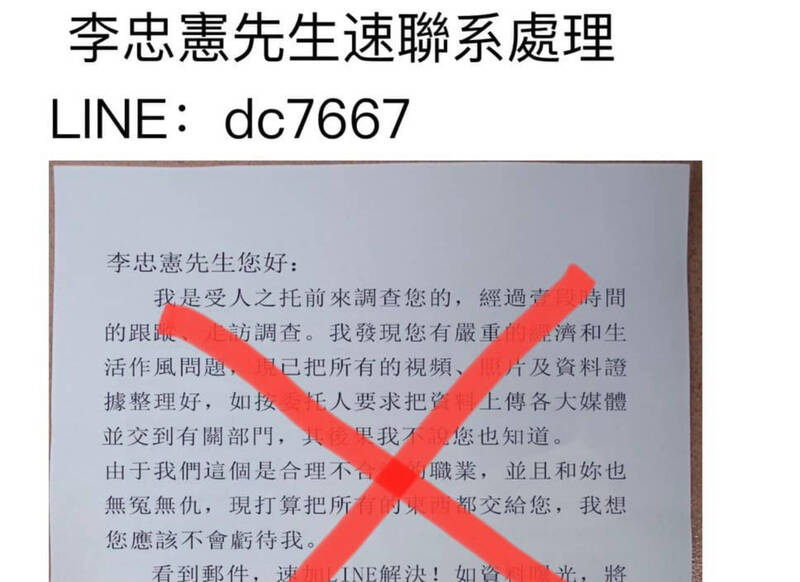

Multiple professors at Taiwanese universities were targeted by extortion emails containing AI-generated explicit deepfake images bearing their faces. The images, created by replacing the faces in stock photos, were used to threaten reputational harm unless payment was made. The incidents caused psychological distress and prompted police investigations and institutional warnings. [AI generated]